Disinformation is one of the key challenges of our times. Sometimes it may seem to be everywhere around us: from family gatherings, where heated discussions on politics, society and even personal health choices take place, to the internet, social media and even international politics.

It is not just individuals on the internet who are creating and spreading disinformation now and then. Foreign states, particularly Russia and China, have systematically used disinformation and information manipulation to sow division within our societies and to undermine our democracies, by eroding trust in the rule of law, elected institutions, democratic values and media. Disinformation as part of foreign information manipulation and interference poses a security threat affecting the safety of the European Union and its Member States.

What is disinformation exactly? How can we avoid falling for it, if at all? How can we respond to it? The Learn platform aims to help you find answers to these and other topical questions based on EUvsDisinfo’s collective experience gained since its creation in 2015. Here, you will find some of our best texts and a selection of useful tools, games, podcasts and other resources to build or strengthen your resilience to disinformation. Learn to discern with EUvsDisinfo, #DontBeDeceived and become more resilient.

Define

Fake news

Inaccurate, sensationalist, misleading information. The term “fake news” has strong political connotations and is woefully inadequate to describe the complexity of the issues at stake. Hence, at EUvsDisinfo we prefer more precise terms for the phenomenon (e.g. disinformation, information manipulation).

Propaganda

Content disseminated to further one's cause or to damage an opposing cause, often using unethical persuasion techniques. This is a catch-all term with strong historical connotations, so we rarely use it in our work. Notably, the International Covenant on Civil and Political Rights, adopted by the UN in 1966, states that propaganda for war shall be prohibited by law.

Misinformation

False or misleading content shared without intent to cause harm. However, its effects can still be harmful, e.g. when people share false information with friends and family in good faith.

Disinformation

False or misleading content that is created, presented and disseminated with an intention to deceive or secure economic or political gain and which may cause public harm. Disinformation does not include errors, satire and parody, or clearly identified partisan news and commentary.

Information influence operation

Coordinated efforts by domestic or foreign actors to influence a target audience using a range of deceptive means, including suppressing independent information sources in combination with disinformation.

Foreign information manipulation and interference (FIMI)

A pattern of behaviour in the information domain that threatens values, procedures and political processes. Such activity is manipulative (though usually not illegal), conducted in an intentional and coordinated manner, often in relation to other hybrid activities. It can be pursued by state or non-state actors and their proxies.

Understand

What is Disinformation?

And why should you care?

Some would say that disinformation, or lying, is a part of human interaction. White lies, blatant lies, falsifications, “alternative facts”; propaganda has followed humankind throughout our history. Even the snake in the garden of Eden lied to Adam and Eve!

Others would add that disinformation, especially used for political or geopolitical purposes, is a much more recent invention that became widely used by the totalitarian regimes of the 20th century. And that it was perfected by the KGB - the Soviet Union’s main security agency - which developed so-called “active measures”[1] to sow division and confusion in attempts to undermine the West. And that disinformation continues to be used by Russia for the same purpose to this day. (You can learn more about how Russia has revitalised KGB disinformation methods in our 2019 interview with independent Russian journalist Roman Dobrokhotov.)

There are many ways to answer the question of what disinformation is, and at EUvsDisinfo we have considered its philosophical, technological, political, sociological and communications aspects. We have tried to cover them all in this LEARN section.

Our own story began in 2015, after the European Council, the highest level of decision-making in the European Union, called out Russia as a source of disinformation and tasked us with challenging Russia’s ongoing disinformation campaigns. Read our story here. In 2014 – the year before EUvsDisinfo was set up – a European country had, for the first time since World War II, used military force to attack and take land from a neighbour: Russia illegally annexed the Ukrainian peninsula of Crimea. Russia’s aggression in Ukraine was accompanied by an overwhelming disinformation campaign, culminating in an all-out invasion and large-scale genocidal violence against Ukraine. Countering Russian disinformation means fighting Russian aggression – as told by Ukrainian fact-checkers VoxCheck, who talked to us quite literally from the battlefield trenches where they continue to defend Ukraine.

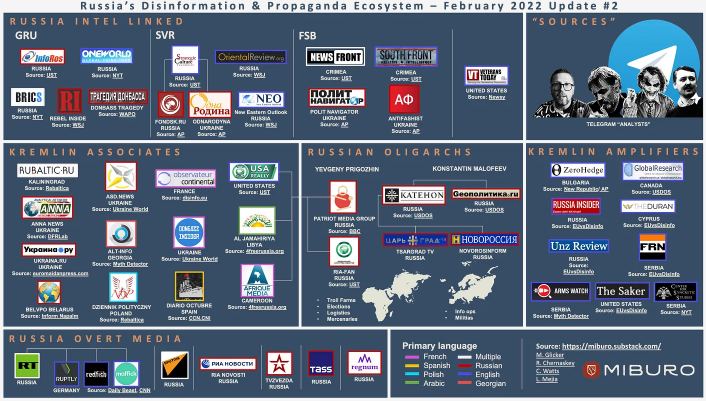

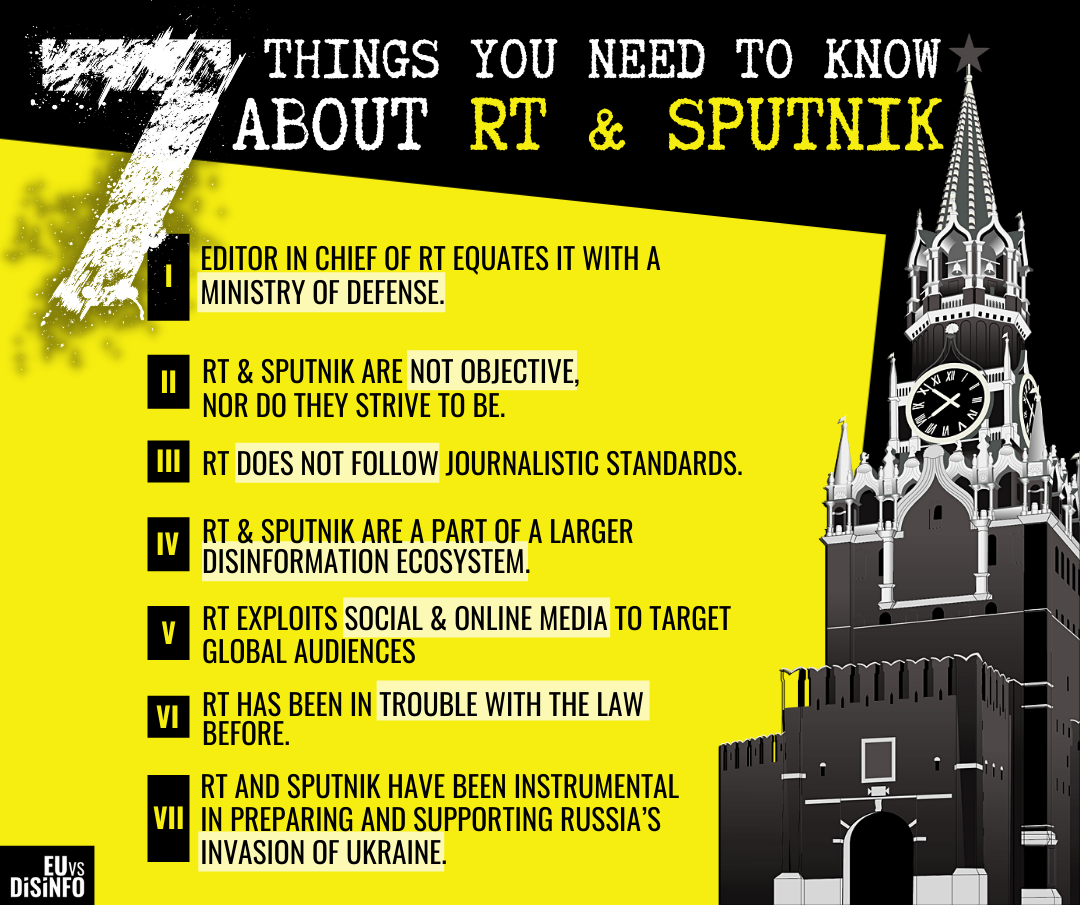

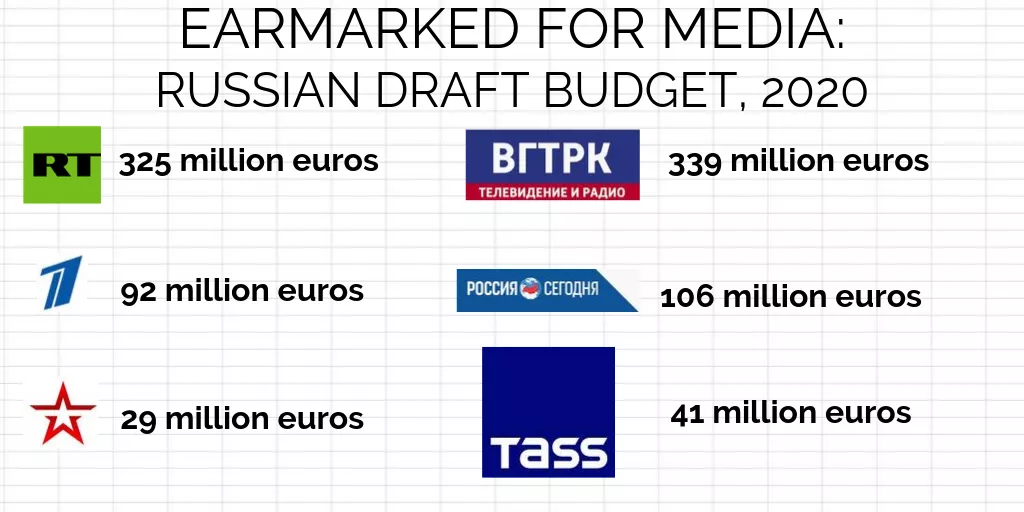

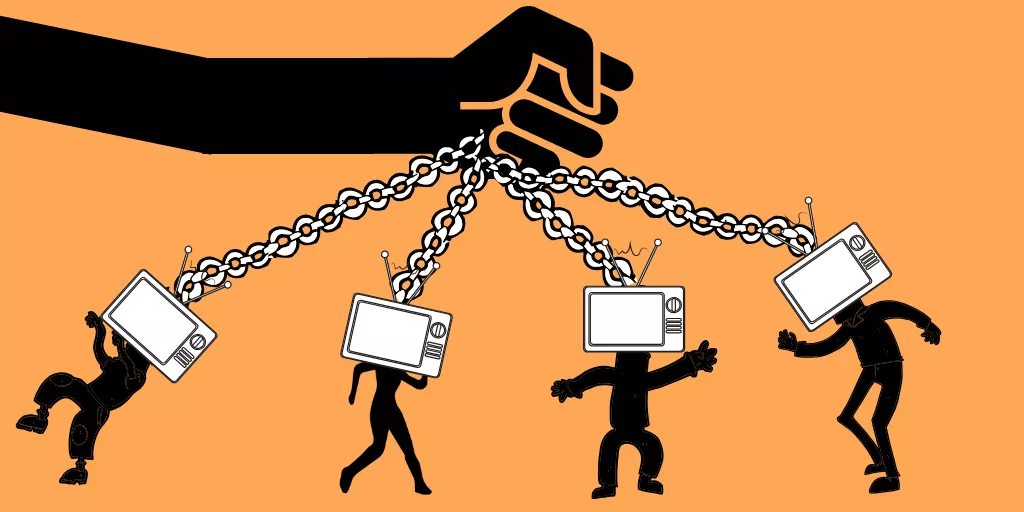

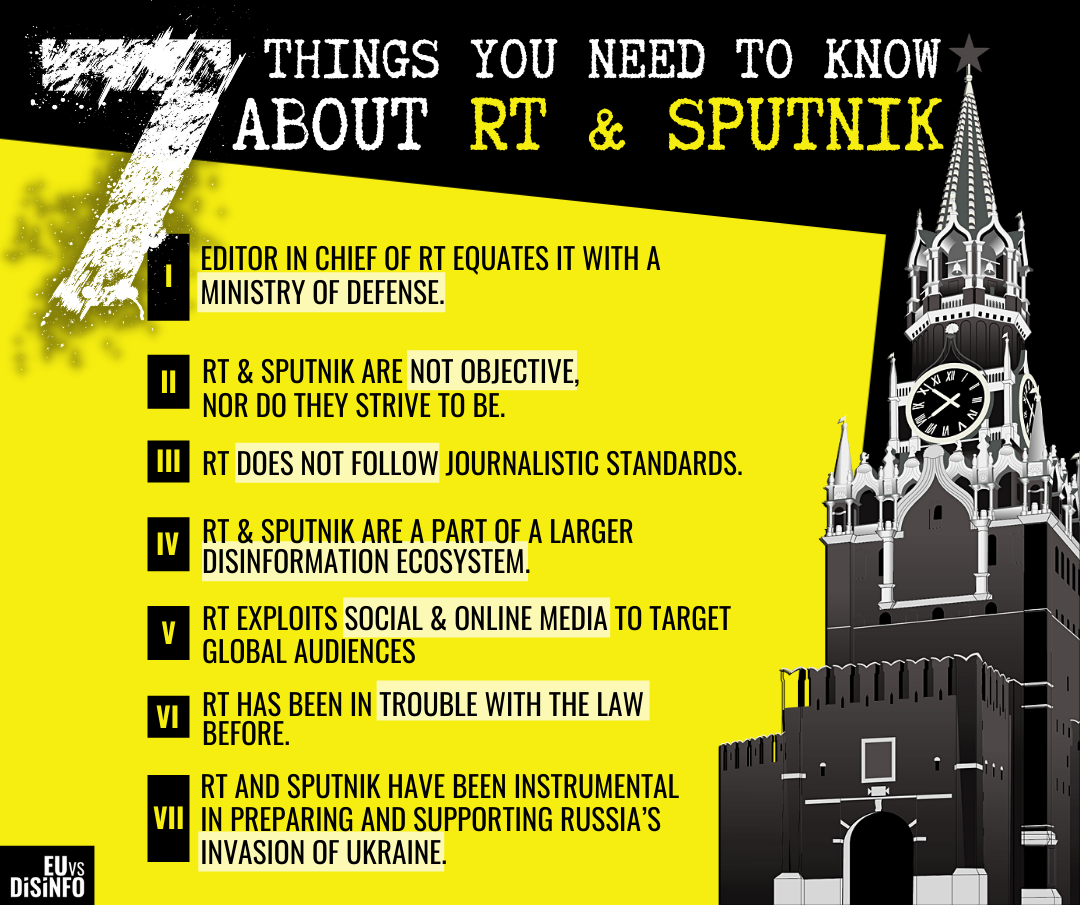

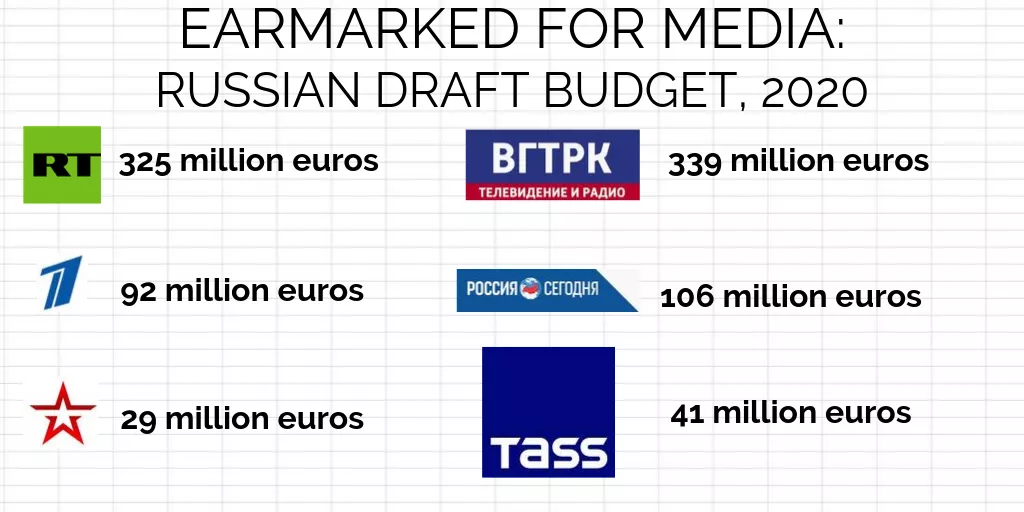

It is hard to overstate the role of Russian state-controlled media and the wider pro-Kremlin disinformation ecosystem in mobilising domestic support for the invasion of Ukraine.[2] The Kremlin’s grip on the information space in Russia also illustrates how authoritarian regimes use state-controlled media as a Tribune: a platform for disseminating instructions to their subjects on how to act and what to think, demanding unconditional loyalty from the audience. This stands in sharp contrast to the understanding of media as a Forum where a free exchange of views and ideas takes place; where debate, scrutiny and criticism create the public discourse that sustains democracies. (We explore these concepts in our text on propaganda and disempowerment.)

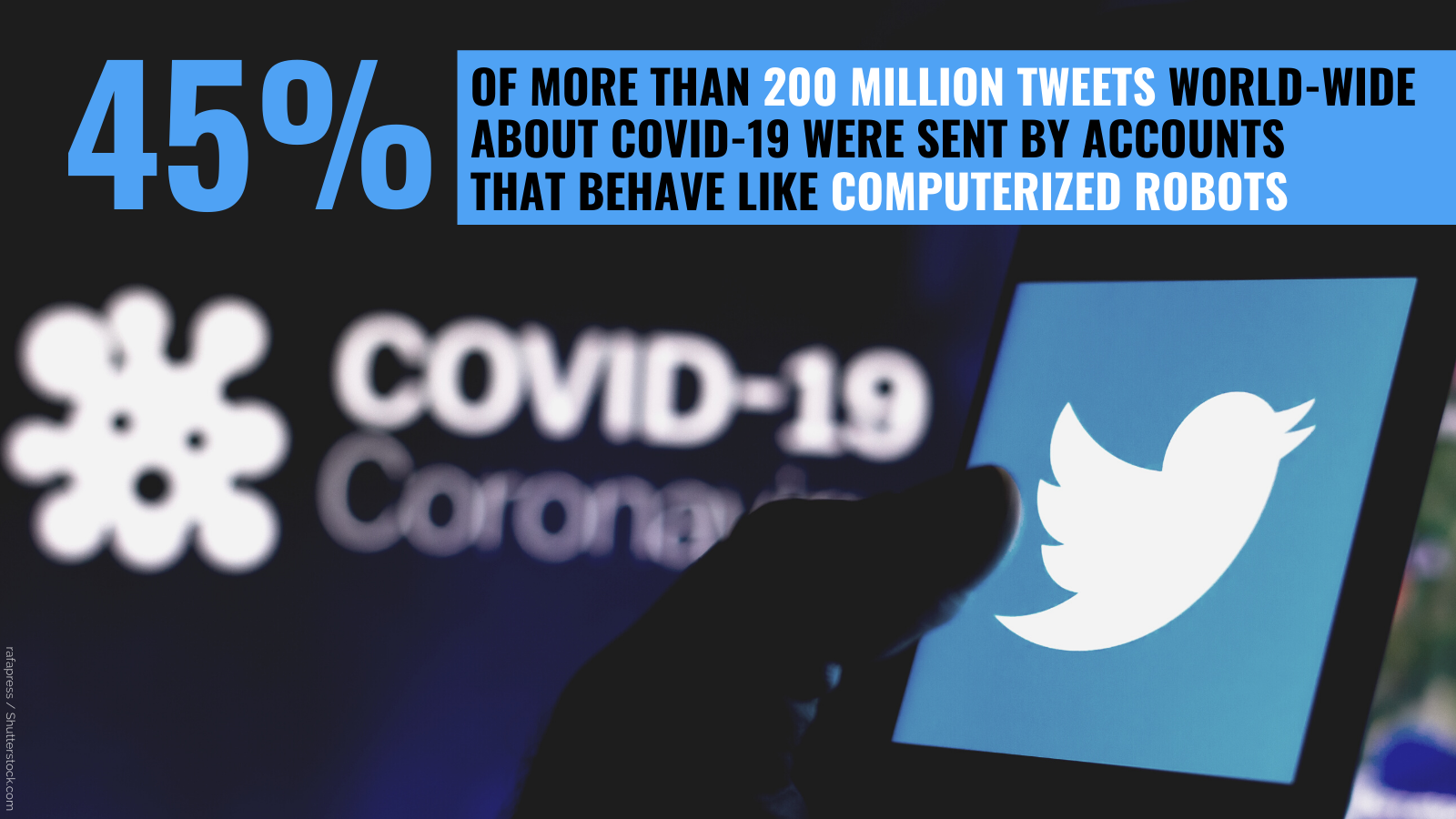

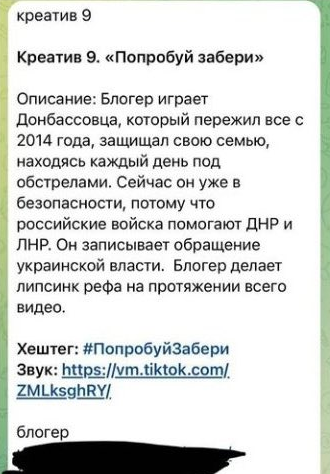

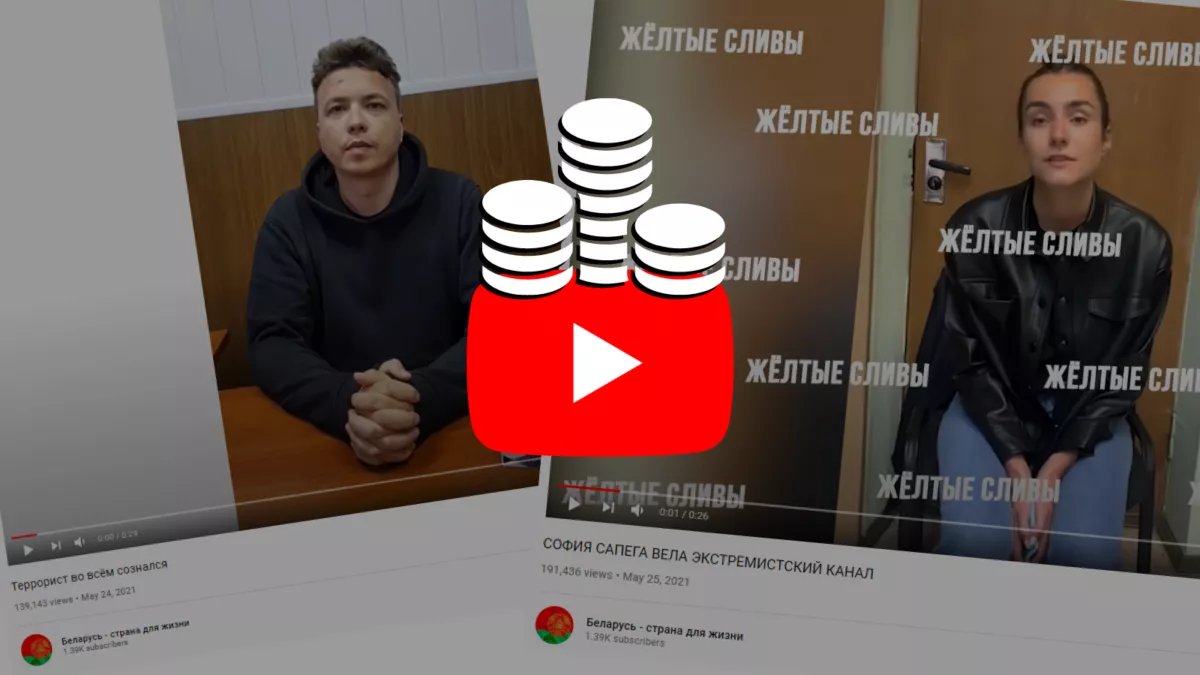

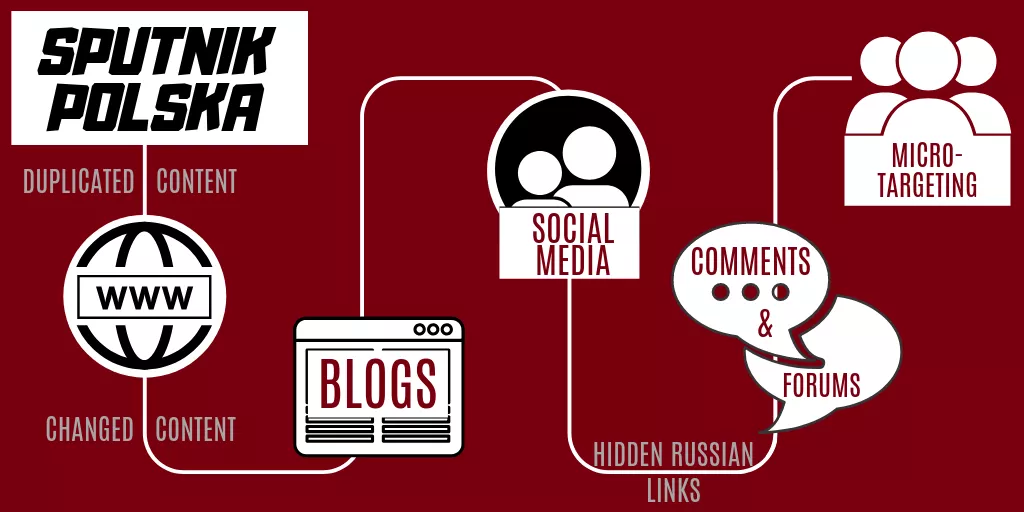

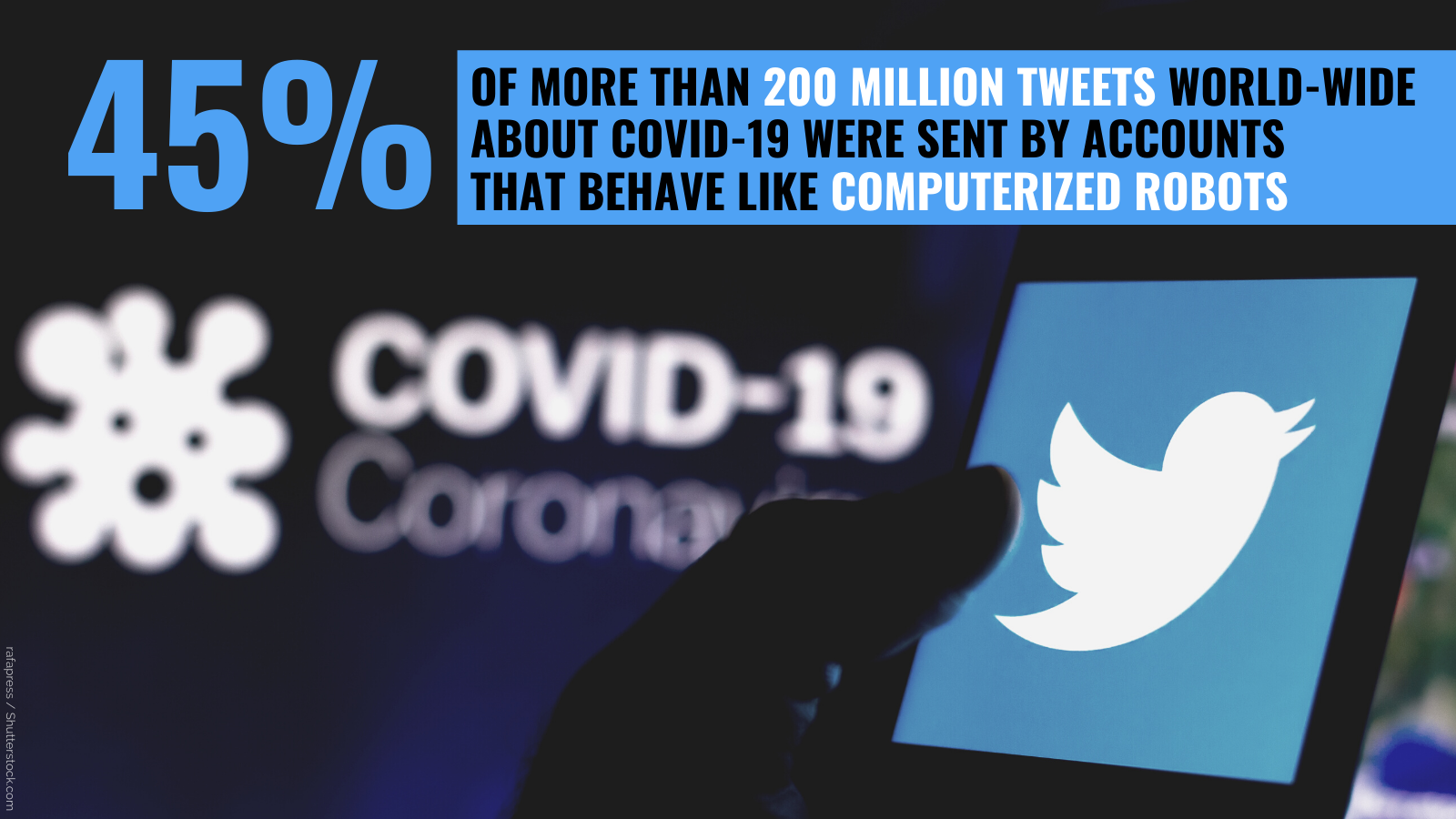

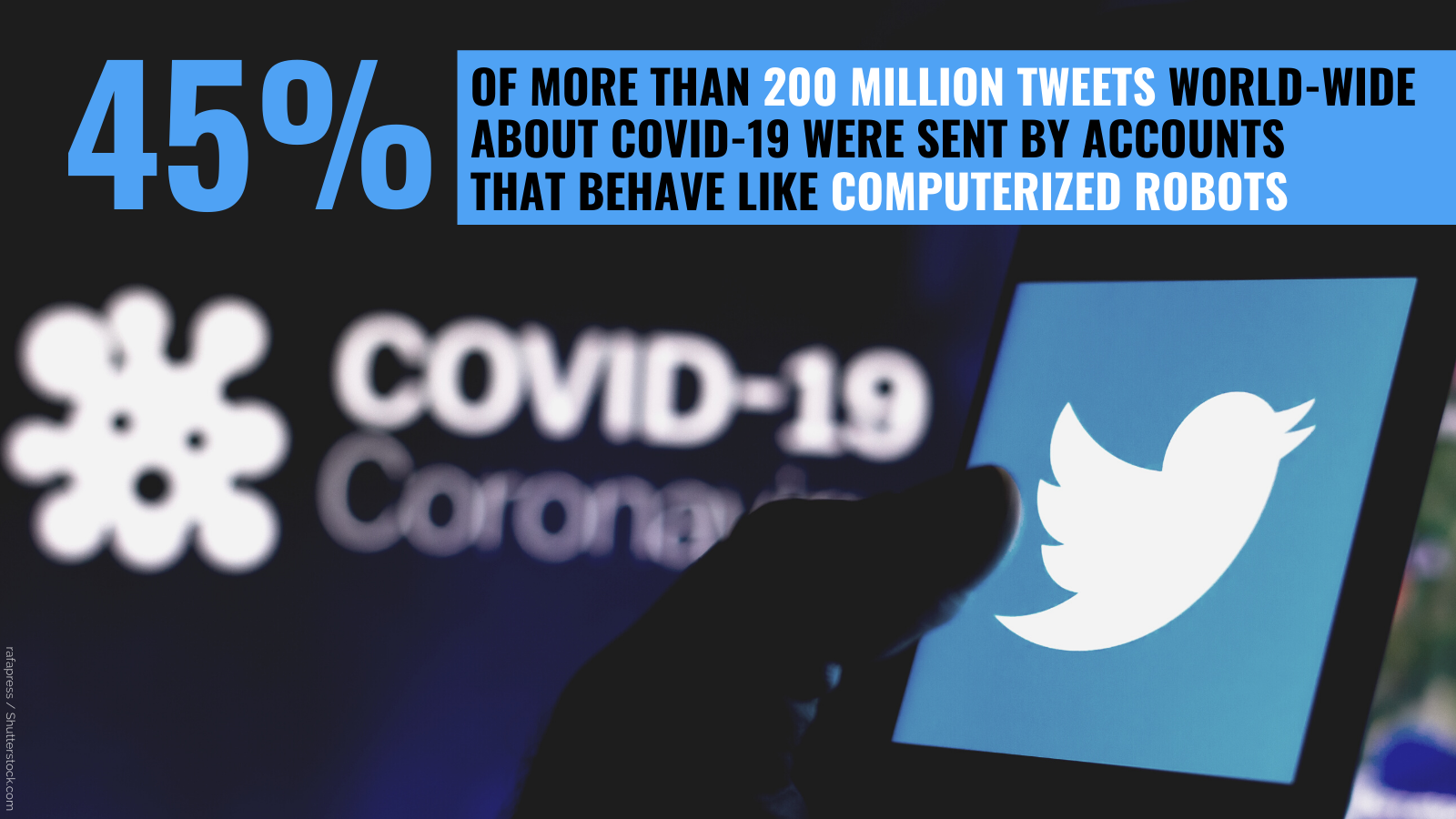

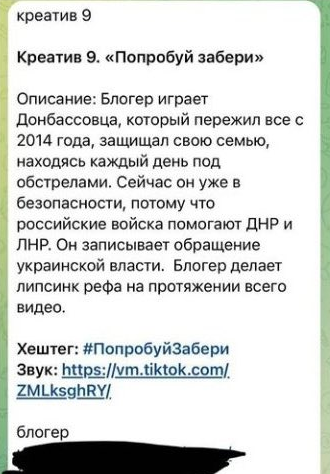

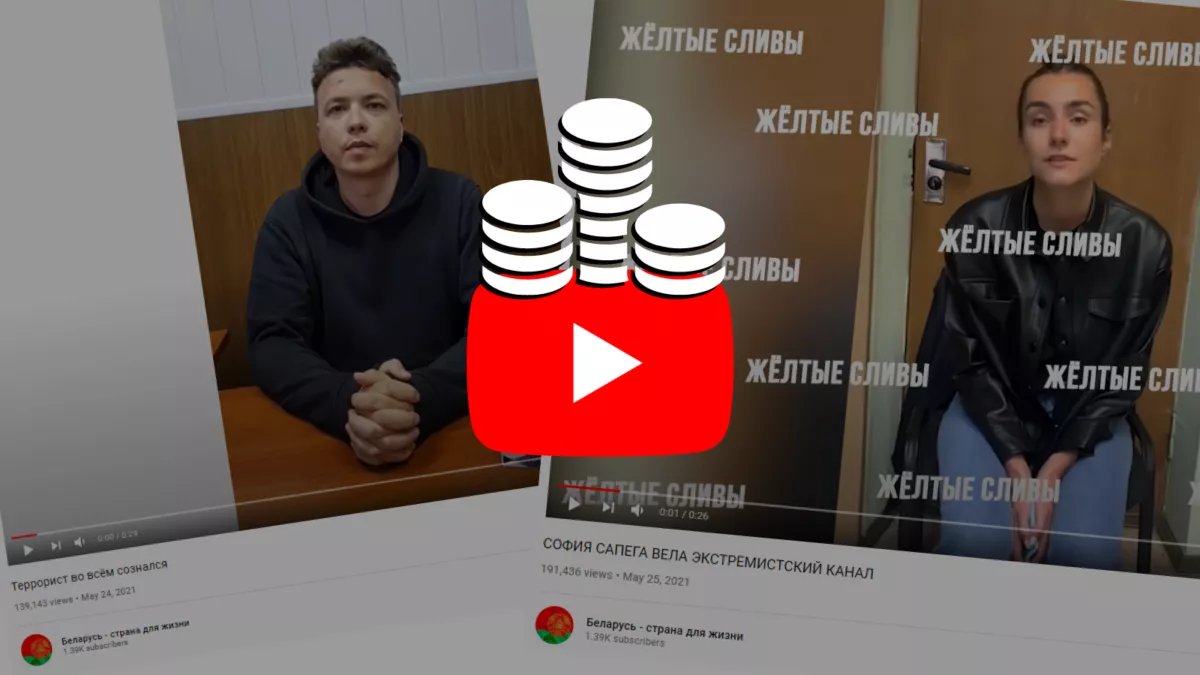

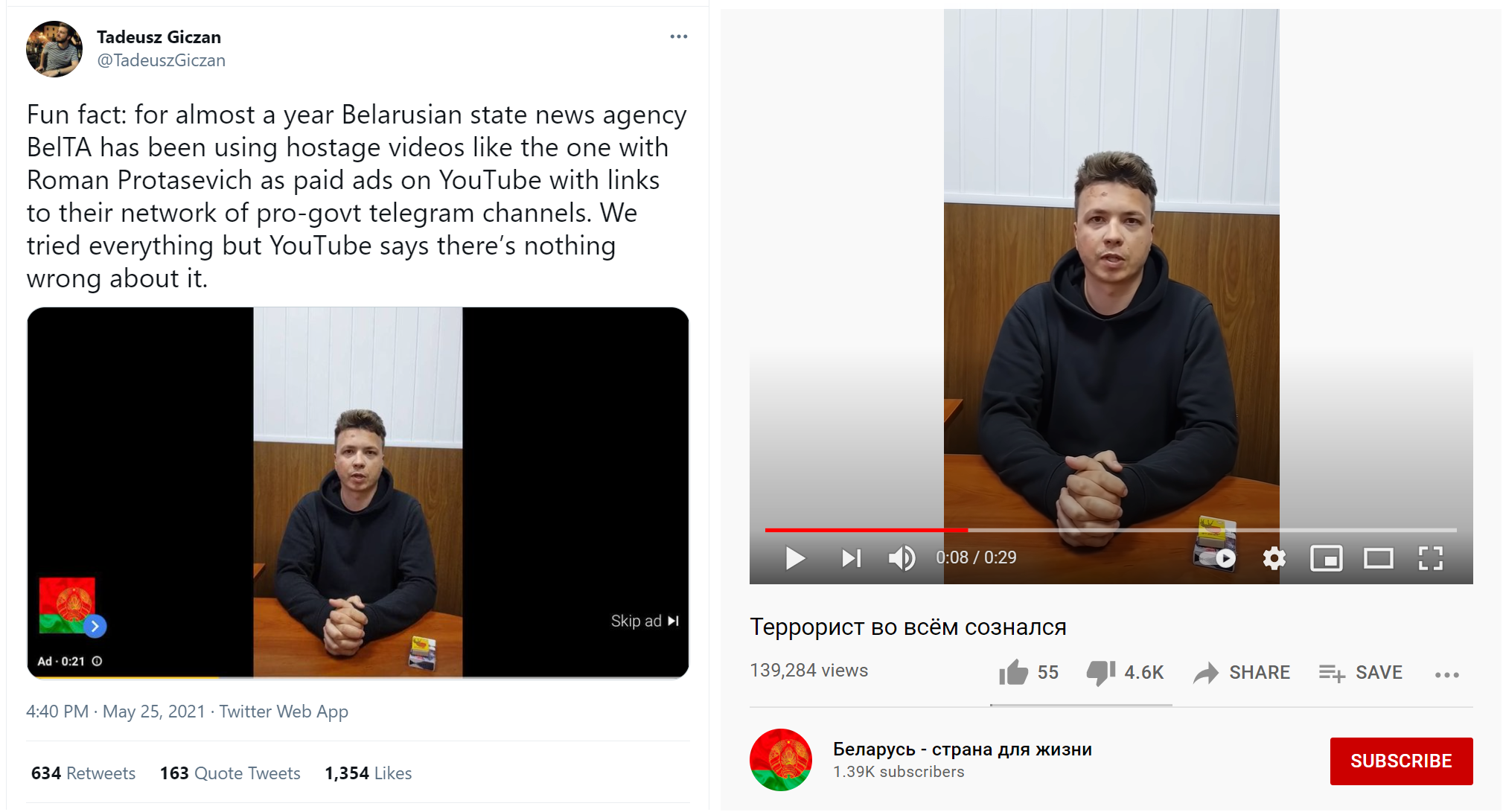

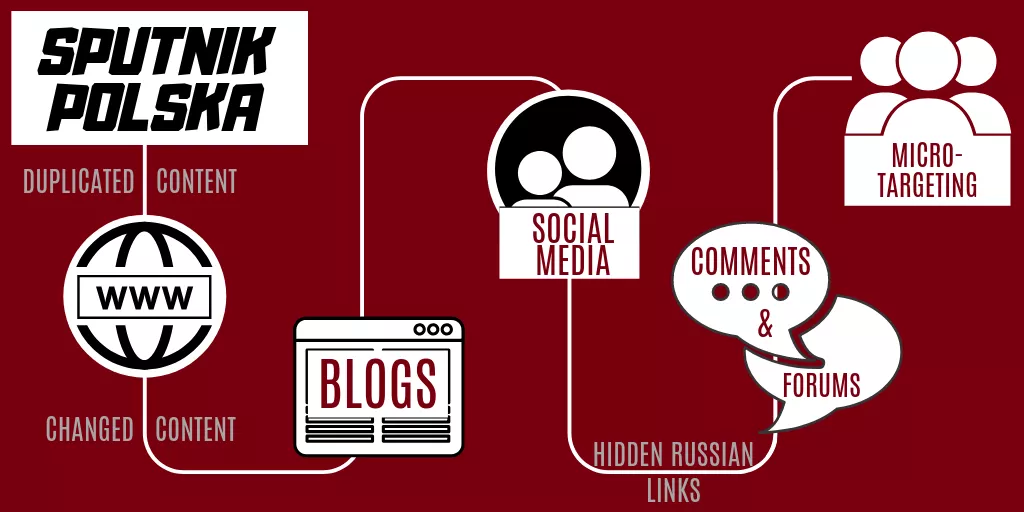

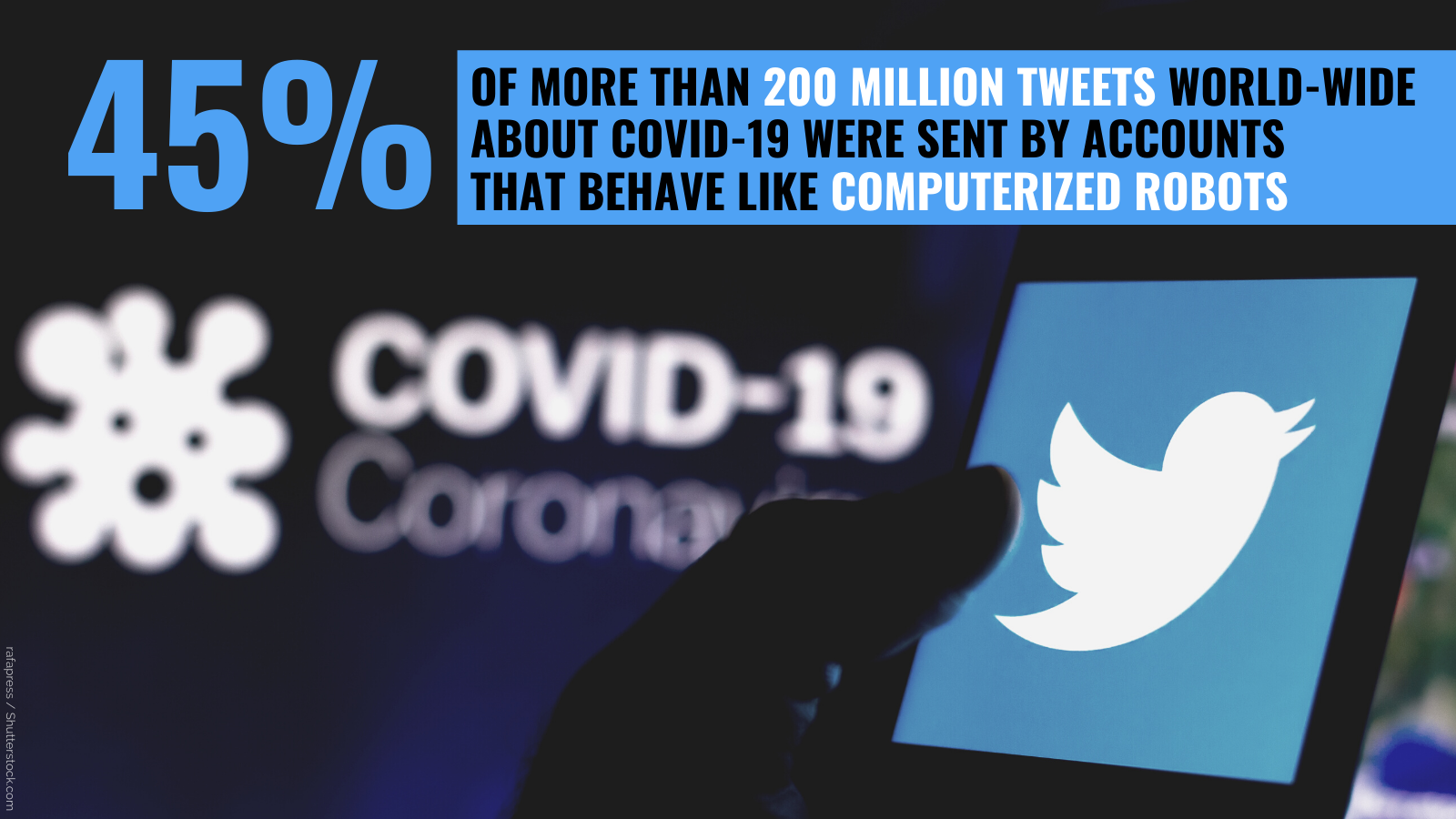

Just like the use of media as a Tribune, pro-Kremlin disinformation is supported by a megaphone – the megaphone of manipulative tactics. The use of bots, trolls, fake websites, fake experts and many other activities that distort the genuine discussions we need for democratic debate is designed to reach as many people as possible, to make them feel uncertain and afraid, and to instil hatred in them. This shows that it is not a matter of free speech: the right to say false or misleading things is protected in our societies. Rather, it is a matter of the Kremlin using all this manipulation to be louder than everyone else. Such information manipulation and interference, including disinformation, is what EUvsDisinfo wants to expose, explain and counter.

Disinformation and other information manipulation efforts, which we also cover in LEARN, attempt to poison such public discourse. Thus, countering disinformation also means defending democracy and standing up against authoritarianism.

Scroll through this section and make sure to check the others to learn more about the Narratives and Rhetoric of pro-Kremlin disinformation; Disinformation Tactics, Techniques and Procedures; the Pro-Kremlin Media Ecosystem; and Philosophy and Disinformation. Check out the Respond section to learn what you can do about it. And if you are still curious, we have something special for you too!

[1] The New York Times made an excellent documentary on this back in 2018, called “Operation InfeKtion” (available in English).

[2] It is for this reason that the EU has sanctioned several dozen Russian propagandists and suspended the broadcasting of Russian state-controlled outlets such as RT on the territory of the EU.

“To Challenge Russia’s Ongoing Disinformation Campaigns”: The Story of EUvsDisinfo

With this article, we invite our readers on a tour behind the scenes of the output you see on the EUvsDisinfo website, including the public disinformation database; our presence on Facebook and Twitter and our weekly newsletter, the Disinformation Review.

We go through some key moments of our history in changing contexts; from the 2015 decision to set up the East StratCom Task Force in the light of the conflict in Ukraine to the reality of the COVID-19 infodemic in the spring of 2020. As a part of this story, we share some of the thinking behind our methods and approaches.

A unique mandate

On 19 and 20 March 2015, the leaders of the 28 EU countries gathered in Brussels. One of the decisions the participants in this summit put down on paper was the following:

“The European Council stressed the need to challenge Russia's ongoing disinformation campaigns and invited the High Representative, in cooperation with Member States and EU institutions, to prepare by June an action plan on strategic communication. The establishment of a communication team is a first step in this regard.”

With a unanimous decision coming from the highest level of decision making in the European Union – 28 heads of state and government – and with the clear language in which Russia was called out as a source of disinformation, the future work of what became the East Stratcom Task Force had been given a unique and strong mandate.

In March 2015, the leaders of the 28 EU member states decided to set up the East Stratcom Task Force.


A team of experts, who mainly had their background in communications, journalism and Russian studies, was formed in the EEAS – the EU’s diplomatic service, which is led by the EU’s High Representative.

2015: Russian aggression in Ukraine

Before looking into how this mandate was turned into practice, let us recall the situation in the eastern part of the European continent at that time.

In 2014 – the year before the team was set up – a European country had, for the first time since World War II, used military force to attack and take land from a neighbour: Russia illegally annexed the Ukrainian peninsula of Crimea.

Fog of falsehood: From the "little green men" in Crimea to the killing of 298 innocent civilians in the sky over Ukraine in July 2014, Russian authorities and state-controlled media cooperated to spread confusion and hide the truth.


Russia-backed armed separatist groups had also taken control of a part of eastern Ukraine in the Donbas region, which borders Russia. In July 2014, this conflict suddenly moved closer to the European Union itself when Malaysia Airlines Flight MH17 with 298 people on board – among them 80 children and 196 Dutch nationals – was shot down by a Russian missile launched from the part of Ukraine controlled by Russia-backed separatists.

Ukraine and EU as targets of disinformation

Russia’s aggression in Ukraine had been accompanied by an overwhelming disinformation campaign, in which outright lies played a central role. This hybrid operation was integrated into the overall attempt to destabilise Ukraine, and its aim was to undermine Ukraine’s position – both directly in the conflict with Russia and in the eyes of the international community, sowing doubt and confusion.

Russian state-controlled TV showed a woman who claimed to be an eyewitness of Ukrainian forces crucifying a local boy in eastern Ukraine; but the woman turned out to be an actress and the execution had never happened. In another case, Russian audiences were told about a little girl who had been killed as a result of Ukrainian shelling, also in eastern Ukraine; but a BBC journalist managed to make producers from Russia’s NTV admit that they had reported this story, knowing that it was not true. Russian government representatives and Russian media spread dozens of different, contradictory stories about what had happened to Flight MH17 – a smokescreen meant to spread uncertainty and avoid accepting Russia’s responsibility for this horrendous crime.

https://youtu.be/TNKsLlK52ss

Reporters on the ground and investigative journalists have played a key role in exposing pro-Kremlin disinformation. Above is an example of Vice News' award-winning video reporting from Crimea.

At the same time, the EU and its relationship with Ukraine – the largest partner country in the EU’s Eastern Partnership policy – was targeted by disinformation. Among the examples was reporting which accused the EU of financing the construction of “concentration camps” in Ukraine. Similar examples of pro-Kremlin disinformation targeting the relationship between the EU and Ukraine were exposed in the important work of Ukrainian fact-checkers.

This was the geopolitical situation and the information environment which in March 2015 made European leaders take this first political step against disinformation.

Three responses to disinformation

In order to move into action, the formulation of the mandate needed to be translated into concrete work descriptions: What should be understood by the phrase “to challenge”? How can you challenge disinformation? In other words: What should the team be doing?

In consultation with international experts, the EEAS identified not one, but three different strands of work as both politically acceptable and effective means of challenging disinformation:

- The Task Force should make the EU’s own communication more effective, with special focus on the Eastern Partnership countries. In other words, if audiences are to be resilient to, for example, the disinformation targeting the relationship between the EU and Ukraine, it would make sense to raise the level of knowledge about what the EU is and what it does.

- The Task Force should also help to strengthen free and independent media in the same region. One of the best ways of keeping a society resilient to disinformation is to have strong and trusted, independent outlets – including public service media – upholding fundamental journalistic standards.

- Finally, as its third strand of work, the Task Force should raise awareness of the disinformation problem by running an advocacy campaign. This campaign should collect examples of disinformation and exhibit them in a framing that would not reinforce, but instead challenge the disinformation. EUvsDisinfo is this awareness raising campaign.

Since the East Stratcom Task Force began its operations in Brussels on 1 September 2015, these three strands have shaped our work.

In other words, what we publish under the brand EUvsDisinfo is one of the EU’s responses to disinformation – an answer to the fundamental question: How to challenge disinformation?

The EUvsDisinfo awareness raising campaign

The EUvsDisinfo.eu website is the hub of our campaign to raise awareness of pro-Kremlin disinformation. The campaign website includes a number of different products:

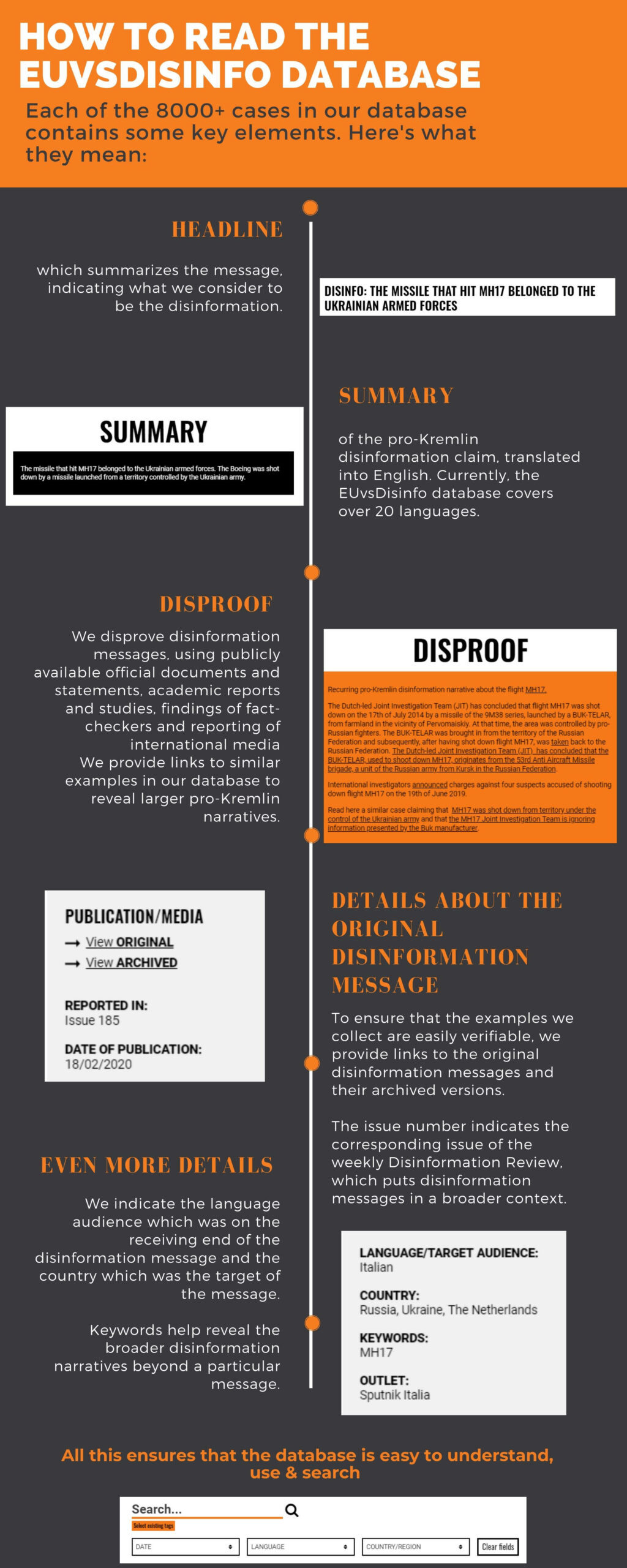

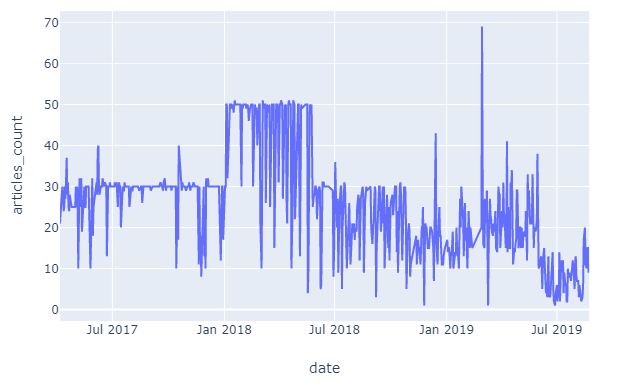

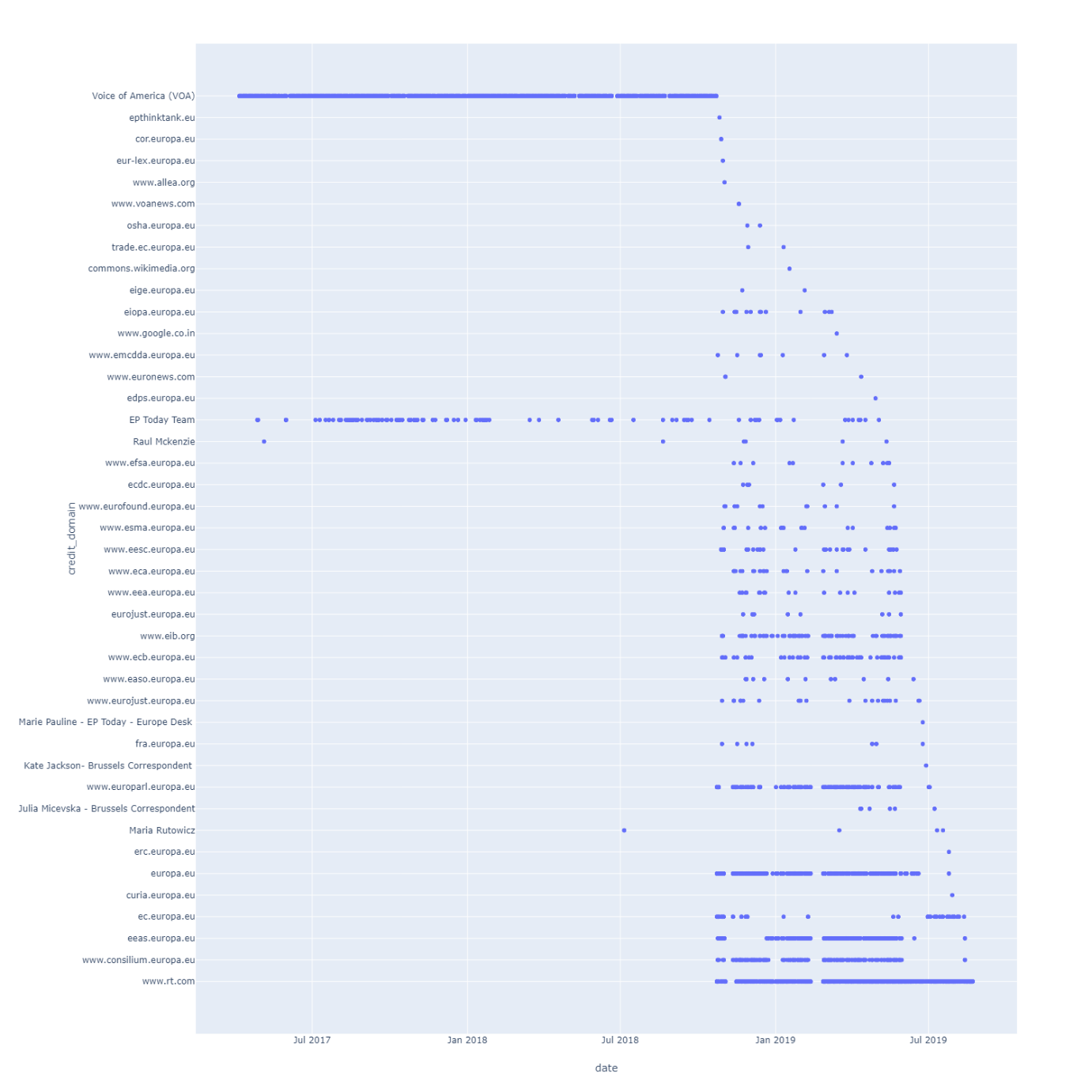

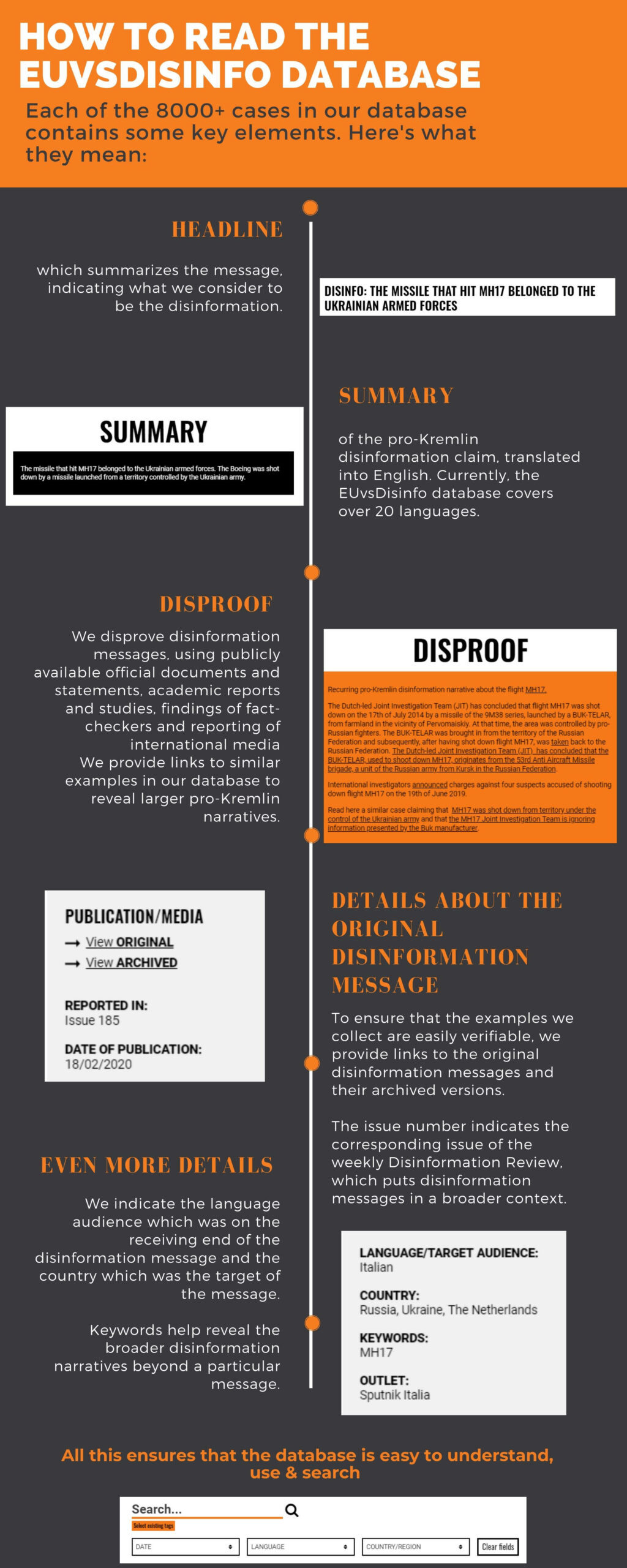

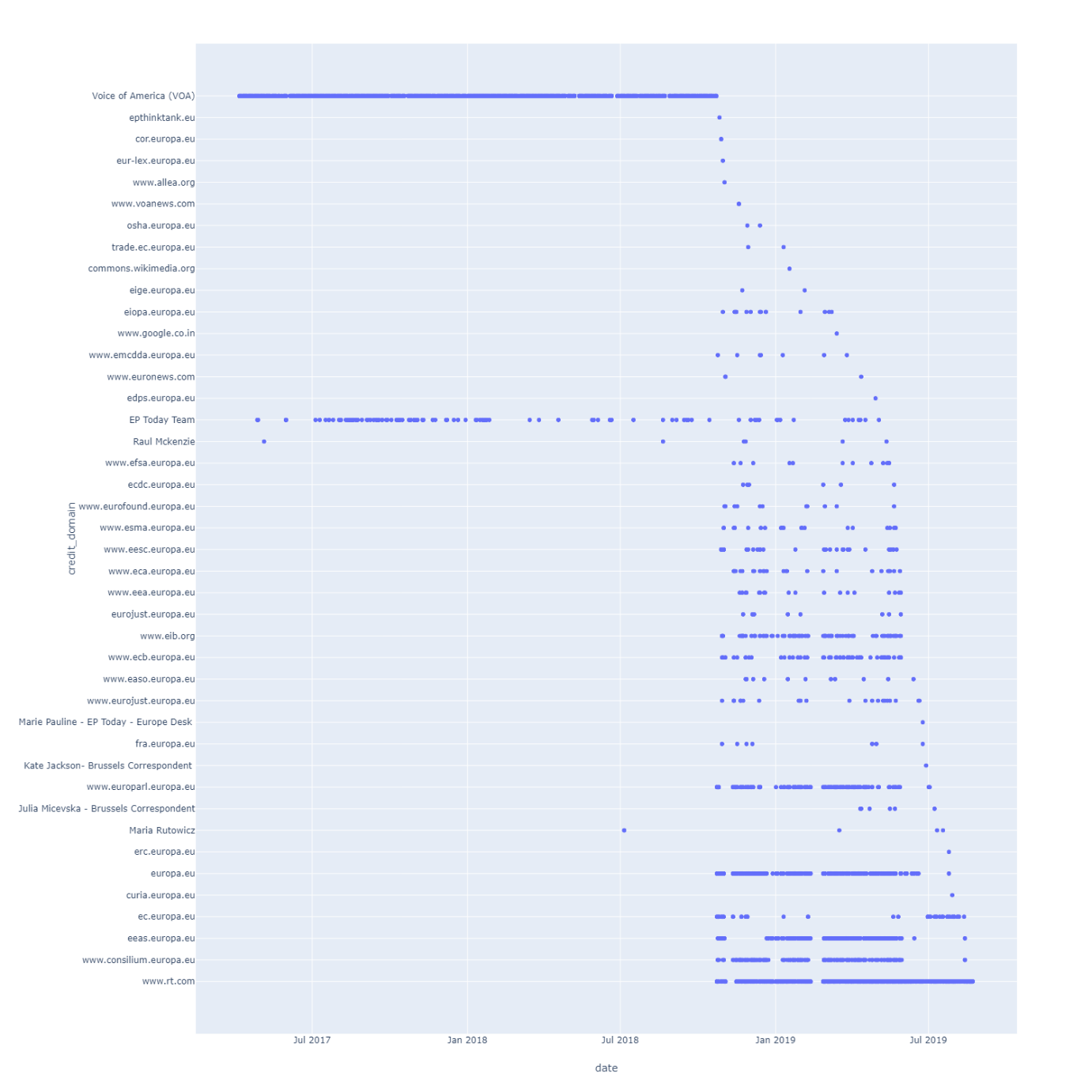

- A publicly available disinformation database: since 2016, we have collected individual examples of disinformation with links to the originals and added short debunks. By April 2020, we had collected more than 8,000 examples. Initially, we relied on the team members’ media monitoring and a network of sympathising volunteers who forwarded examples they had spotted to us. Later, we received funding from the European Parliament, which allowed us to systematise this work with the help of professional media monitoring services. However, the final judgment, i.e. the decision whether to include an example of disinformation in the database, lies with us.

- A weekly Disinformation Review which presents the latest examples in the disinformation database in order to outline the current trends. When similar disinformation messages appear in different media outlets, and sometimes in different languages, it can be a sign that certain narratives are appearing, i.e. attempts to form and spread particular perceptions of reality among audiences using (social) media.

- If the Disinformation Review resembles a news section, we also have a feature section with articles that look deeper into specific topics and narratives over a longer period. This section includes different approaches, and you will see that we use different writing styles: longer analytical pieces, interviews, a “figure of the week”, as well as articles with an entertaining twist, for example when attempts to spread disinformation have exposed themselves in an embarrassing way. Finally, while the Disinformation Review is primarily based on examples of disinformation we ourselves have detected with the help of our professional media monitors, our feature articles frequently highlight cases where investigative and fact-checking journalists have exposed disinformation. These are often articles, radio and TV productions in Russian made by independent Russian journalists, whose important work we thereby make available in English to a wider international audience.

- We have dedicated topical sections, currently two: one about elections, which includes the online output of a campaign we ran in cooperation with colleagues in the European Parliament and at the European Commission representations in the member states to raise awareness of disinformation ahead of the European elections in 2019; and a dedicated COVID-19 section, which collects our output in response to disinformation about the coronavirus pandemic.

- We also produce videos that raise awareness of disinformation, with special attention to examples taken from Russian TV. Sometimes, the simplest response to the problem is to add English subtitles to news broadcasts or talk shows from Russian TV. We present these videos on our Facebook page and on Twitter.

https://www.facebook.com/1087491177963859/videos/488694558669038/

Watch our compilation of highlights from Russian TV in 2019.

Terminology and methodology on EUvsDisinfo

We acknowledge that the results of our work very much depend on the definitions and approaches we use. Some key notions deserve to be singled out as particularly important:

- We prefer to say pro-Kremlin disinformation because our focus is on the message. While it is well known that the Kremlin issues guidelines for media messaging, there are also actors that operate with different degrees of dependency on, loyalty to, or simply inspiration from the narratives of the Russian authorities. For our understanding of this “ecosystem”, see the article "The Strategy and the Tactics of the Pro-Kremlin Disinformation Campaign."

- Since our work is a part of the EU’s foreign policy, we focus on disinformation coming from sources that are external to the EU; and with our mandate in mind, these sources must have a clear connection to the pro-Kremlin ecosystem. The original mandate is the reason why EUvsDisinfo looks specifically at pro-Kremlin disinformation.

- We highlight examples of disinformation messages unless the context clearly states that the claim is untrue. This means, for example, that we do not include clearly labelled satire. Disinformation cases can, however, appear in the context of, for example, a televised talk show where competing opinions are also presented: when the context of a clear disinformation message legitimises it as a relevant “opinion”, even if it is part of a “mix” of different points of view, we consider the case to be relevant to our reporting.

- The EUvsDisinfo database includes “disproofs”, which explain the components that make a certain claim disinformation, i.e. verifiably false or misleading information that is created, presented and disseminated for economic gain or to intentionally deceive the public, and that may cause public harm. We put the disinformation examples in context with the weekly newsletter, the Disinformation Review, and with the feature articles. Our focus on context is due to the fact that we acknowledge the important distinction between misinformation and disinformation, i.e. the difference between an incorrect claim seen in isolation and the way such a claim can be used intentionally, systematically and manipulatively to pursue political goals. Awareness raising is not only about knowing why something is not correct; it is also about understanding how the systems in which such claims appear work. Searching through the database, the reader sees a timeline of how a certain disinformation message pops up for the first time, and how it changes and develops. These findings give researchers, journalists and other users hints about where to look for more. For a detailed breakdown of the terminology we support, we refer you to the terminology table in this article.

From 2018: Increased support and a new mandate

Since 2015, our focus on Ukraine has remained strong; but we have also looked into other areas where pro-Kremlin disinformation has been active, including: migration; the MeToo movement; election interference; human rights; the anti-vaccination movement; the chemical attack in Salisbury; climate; conspiracy theories, and many other topics.

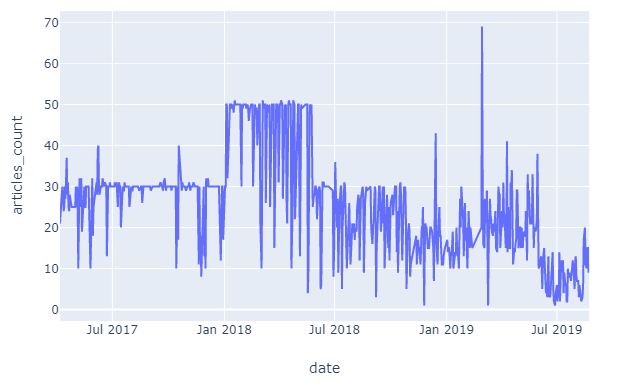

We have also seen growing interest in and support for our work, including from the European Parliament. Starting in 2018, the European Parliament granted us an earmarked budget to support our work. Part of this funding is spent on contracting a systematic media monitoring service, which replaced the initial network of volunteers. We wanted to move from presenting illustrative examples to also including a quantitative approach. As a result, we see and hear more now, and in more languages, than we did in the beginning; and we have become able to identify larger tendencies thanks to access to larger bodies of data.

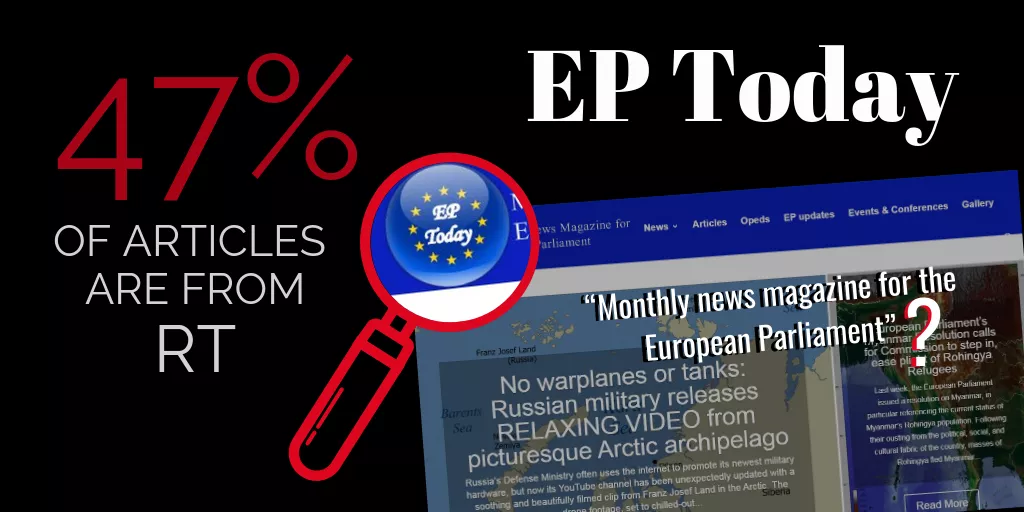

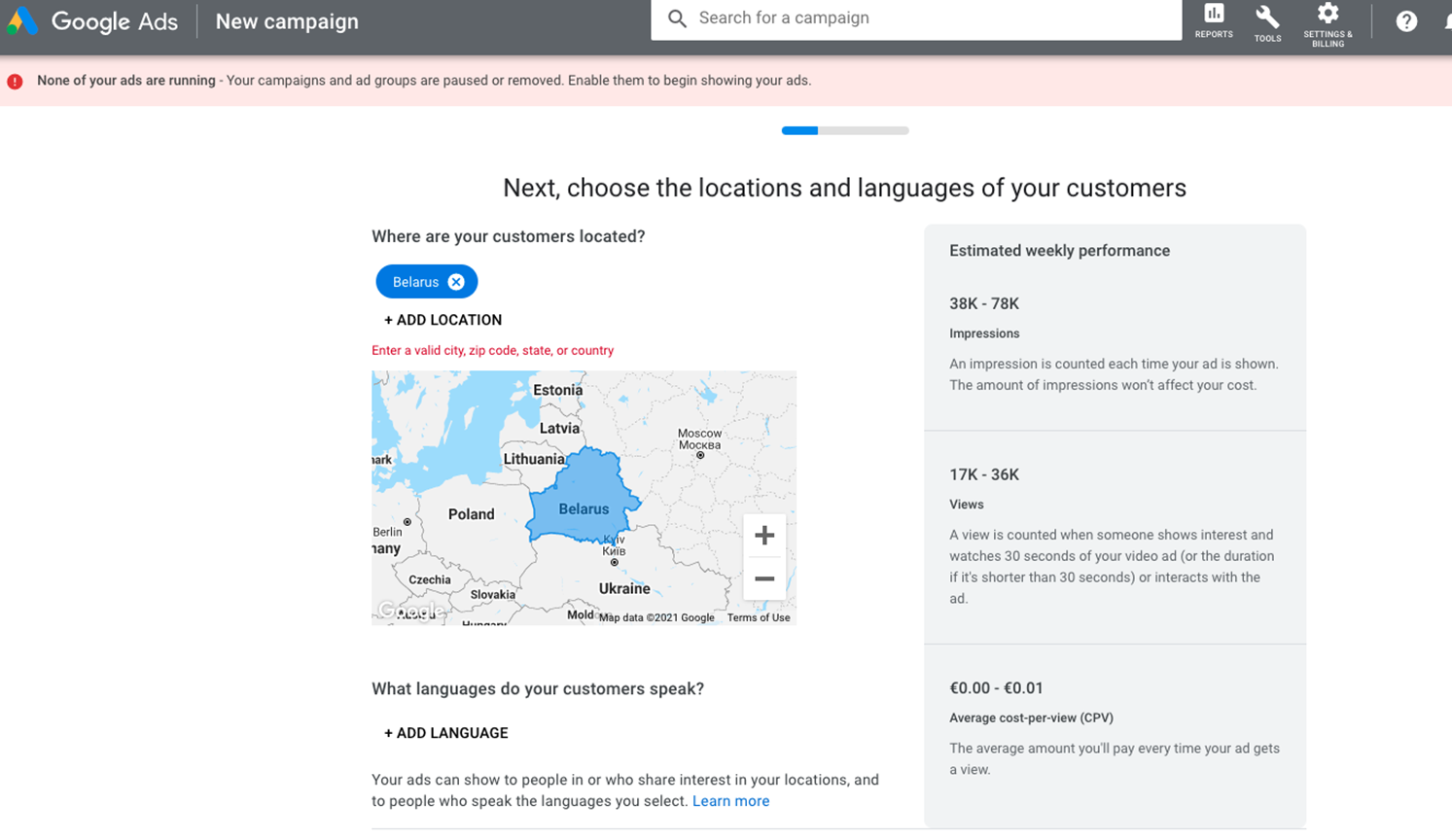

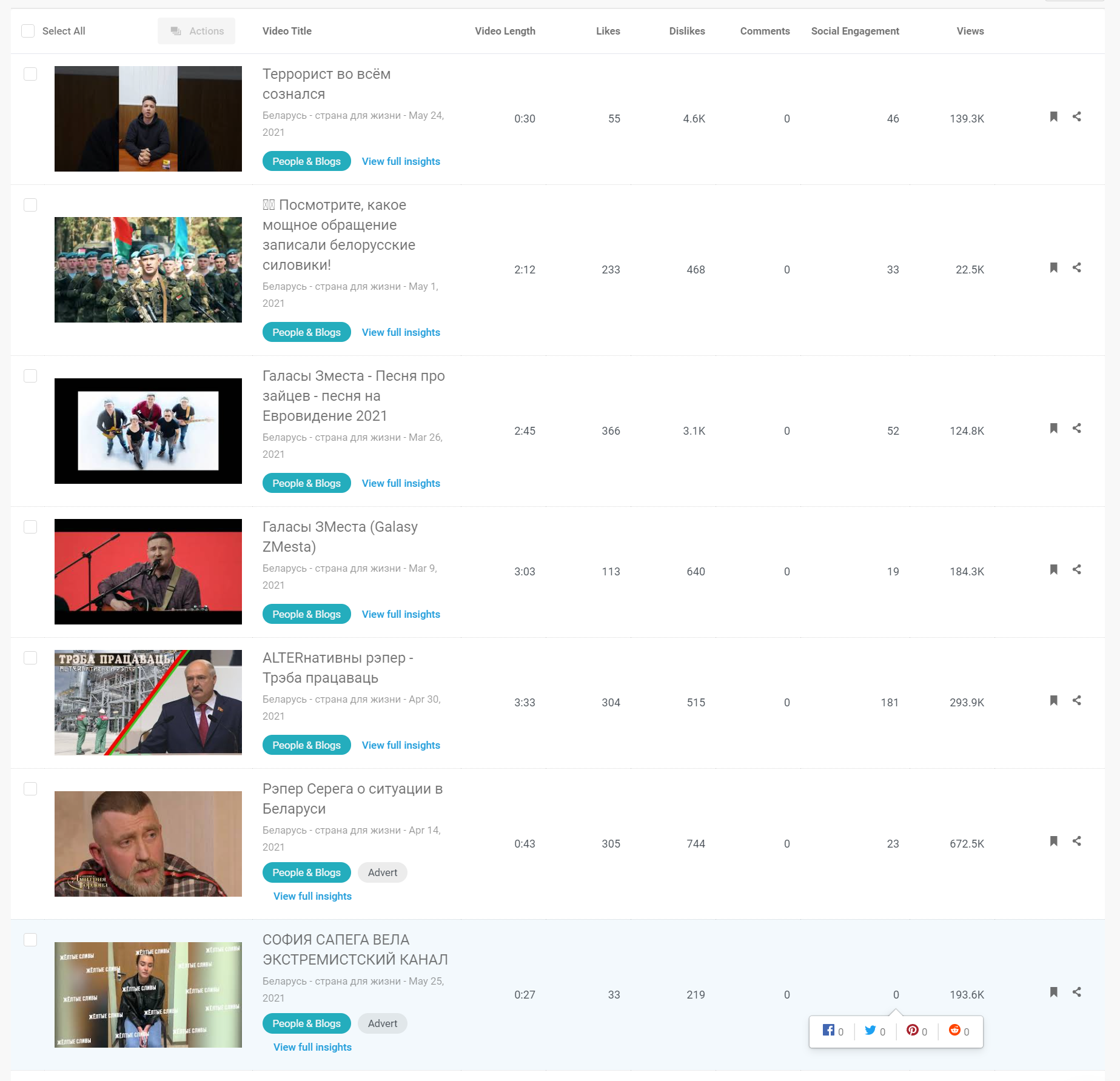

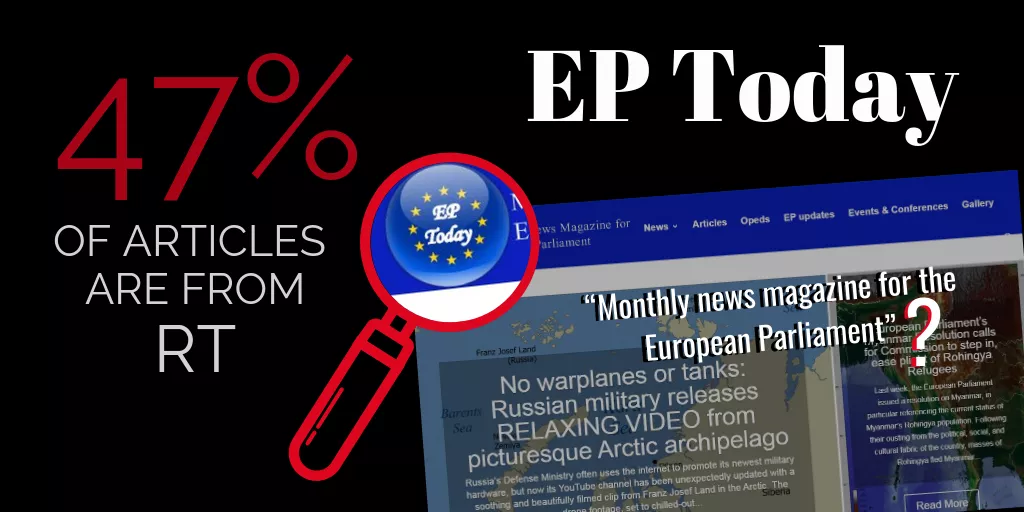

In December 2018, the European leaders gathered again in Brussels for a new discussion on disinformation. This time, they adopted an Action Plan on Disinformation, which acknowledged East Stratcom’s work; this means that our original mandate remained intact. The new Action Plan added new policies and initiatives, including a Rapid Alert System, through which the EU’s member states keep each other informed internally about disinformation, and a Code of Practice, which pushes social media and other tech giants to take more responsibility for information appearing on their platforms. The Action Plan also mentioned the important role played by our colleagues in the EEAS Task Forces for the Western Balkans and the South (the latter covering the Middle East and North Africa). The relevance of these regions is visible in articles published on the EUvsDisinfo website, e.g. about RT disinformation in Arabic and Sputnik in the Western Balkans. Disinformation operations originating from China have recently been added to our broader working environment as a topic of interest, and we have increased our capability to perform data analysis. An example of this work is an article on how a Facebook page pretending to represent the European Parliament has systematically been sharing publications from RT.

In addition to running the EUvsDisinfo online campaign, the East Stratcom Task Force has also begun to organise conferences with the aim of raising awareness of disinformation and bringing experts together; so far, one such event has been held in Brussels and one in Tbilisi, Georgia. In addition, the team cooperates with different actors in the Eastern Partnership countries, including representatives of government institutions.

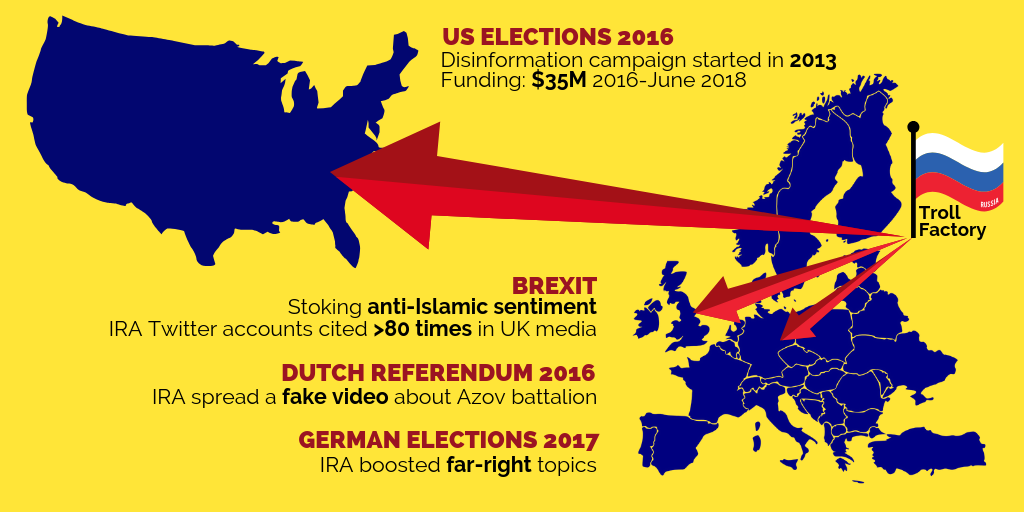

In 2018, the European leaders also stressed the particular importance of protecting elections against disinformation; in response to that, we launched an awareness raising campaign specifically ahead of the European elections in May 2019.

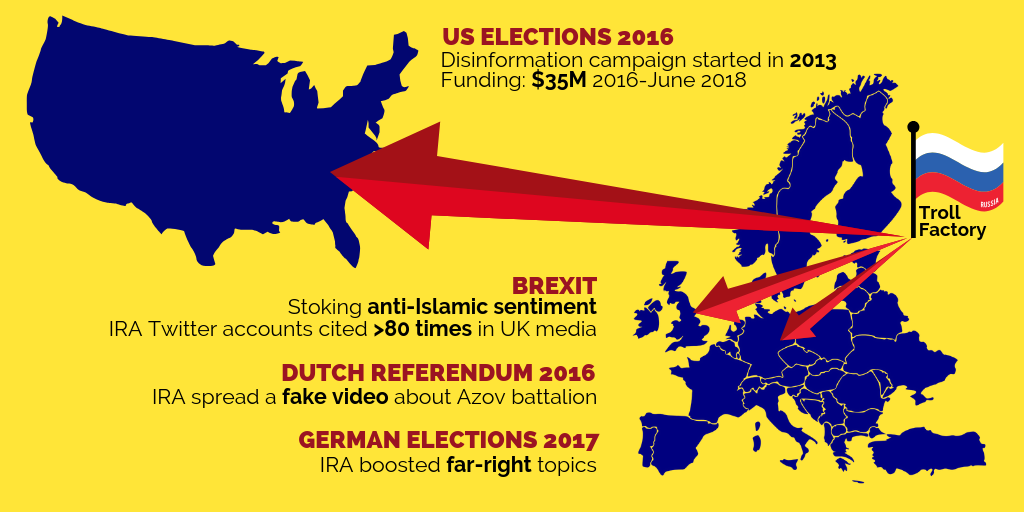

An introduction to the work of the St. Petersburg "troll factory" is among the features in our 2019 campaign to raise awareness of election interference.


Finally, we acknowledge that large parts of our audience prefer to read in languages other than English. Since the very beginning, we have published and promoted Russian versions of all our articles and of the Disinformation Review; we translate select publications into German and are now beginning similar work in French, Italian and Spanish.

The standard products in the EUvsDisinfo output – the disinformation database, the Disinformation Review and our analytical articles – are labelled as “not an official EU position”. We find that a review of other actors’ communications should not be seen as a policy; we want our work to be considered an analytical product made available to the public by the EU.

See also:

Questions and Answers about the East StratCom Task Force

The Strategy and the Tactics of the Pro-Kremlin Disinformation Campaign

Propaganda and Disempowerment

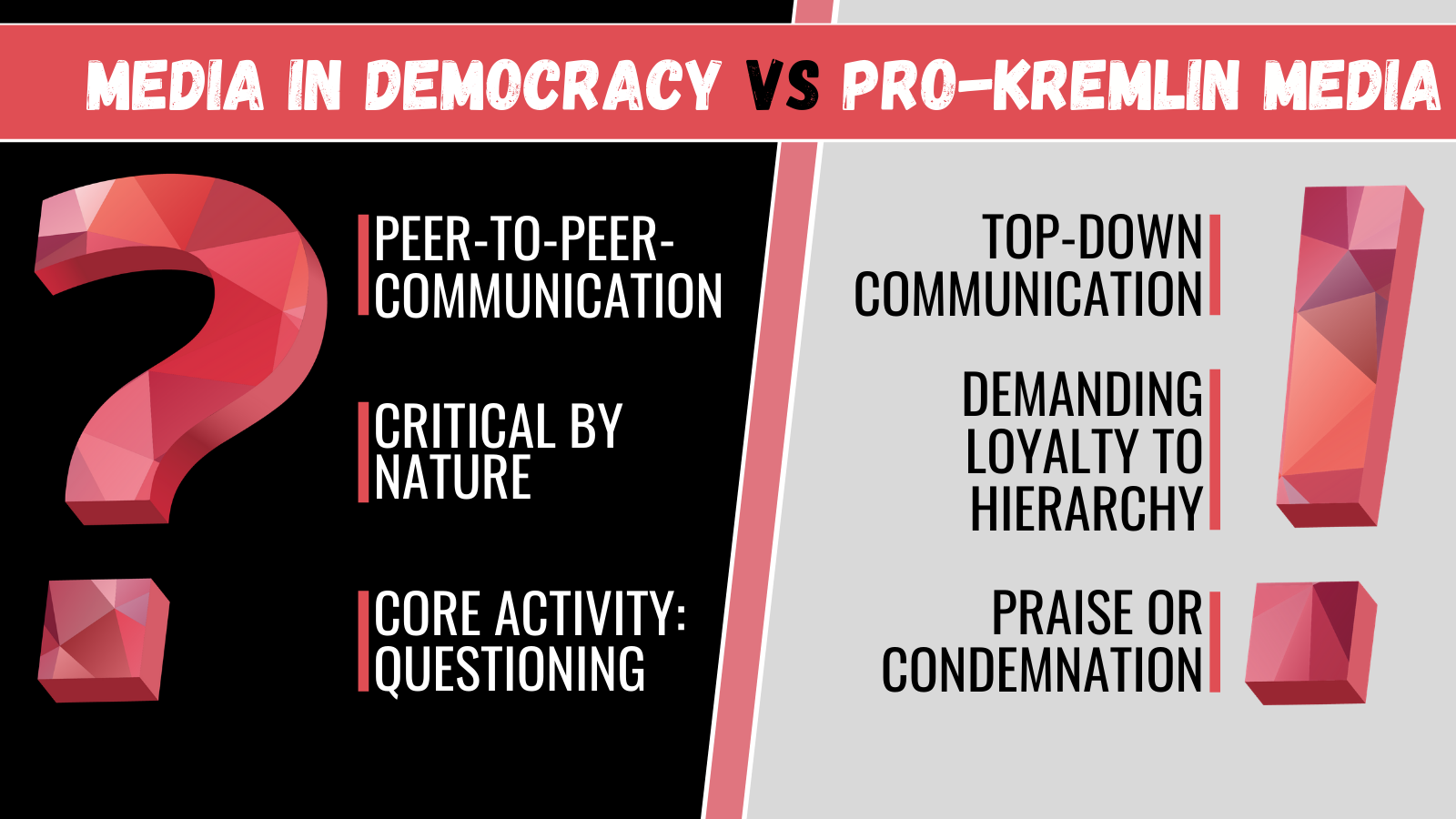

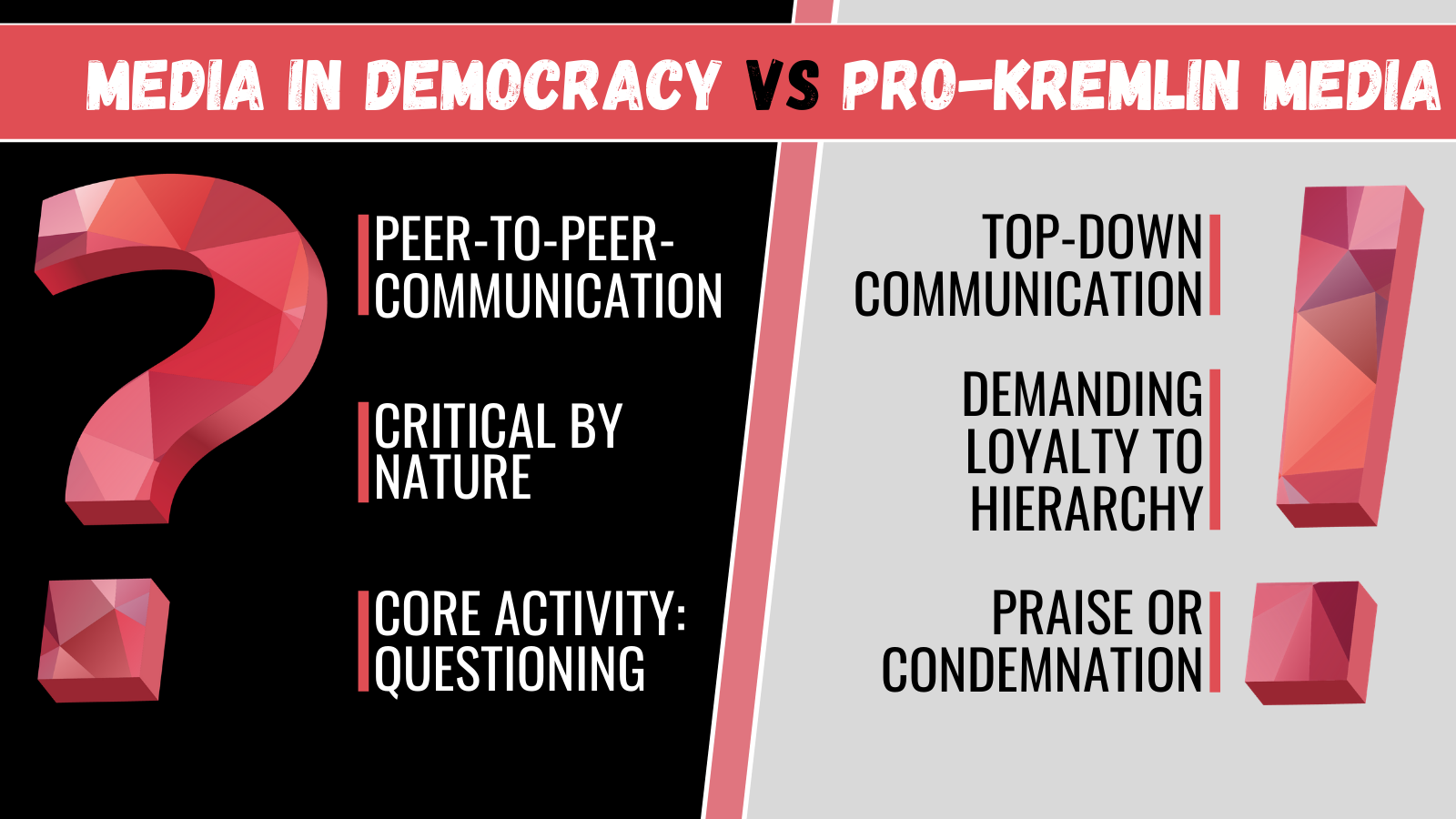

Mass media can be grouped according to two major concepts: media as a Forum and media as a Tribune. This is, of course, a theoretical model describing two ideal types of relations between the media and their audience.

The concept of the Forum is based on a horizontal exchange of ideas and views. Here, the media functions as a space for public discourse. The Forum is not a place where decisions are made; it is a place for debate, questioning, scrutiny and criticism. A successful Forum can be loud, rough and even vulgar. It can be moderated, but never controlled.

The Tribune, by contrast, is first and foremost a platform for disseminating the ideas and values of whoever controls the platform. It is a top-down process, where the audience is expected to passively accept the notions and to receive instructions from the rulers on how to act and what to think. The concept is based on unconditional loyalty on the audience's part.

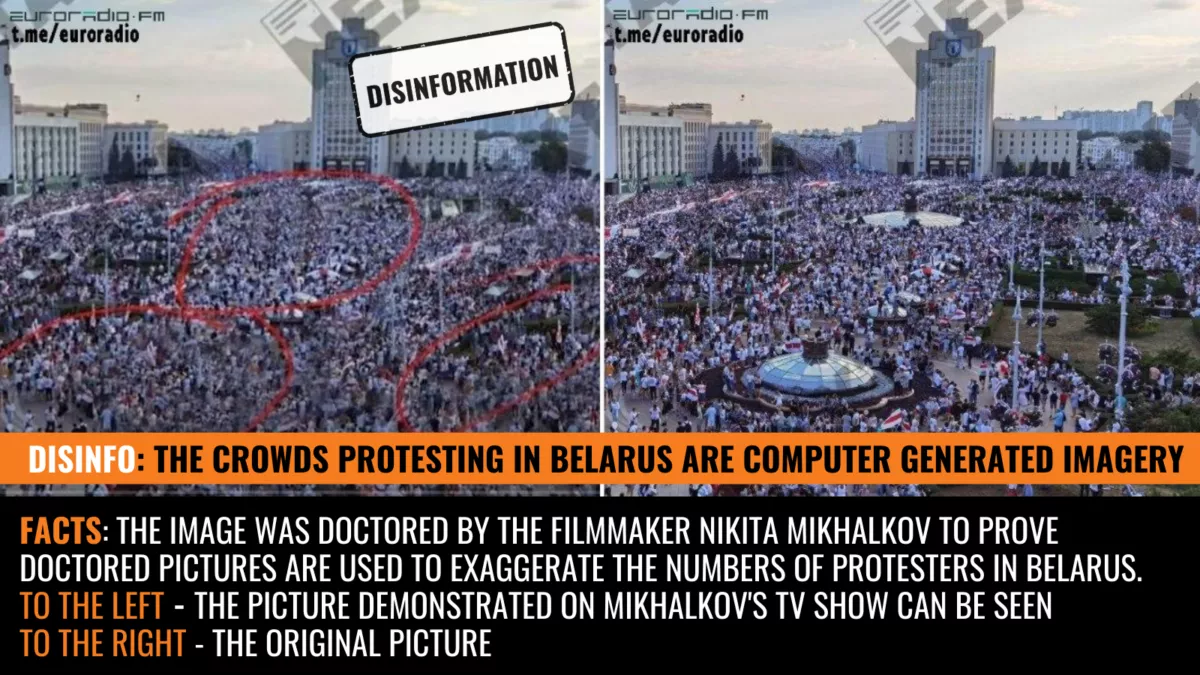

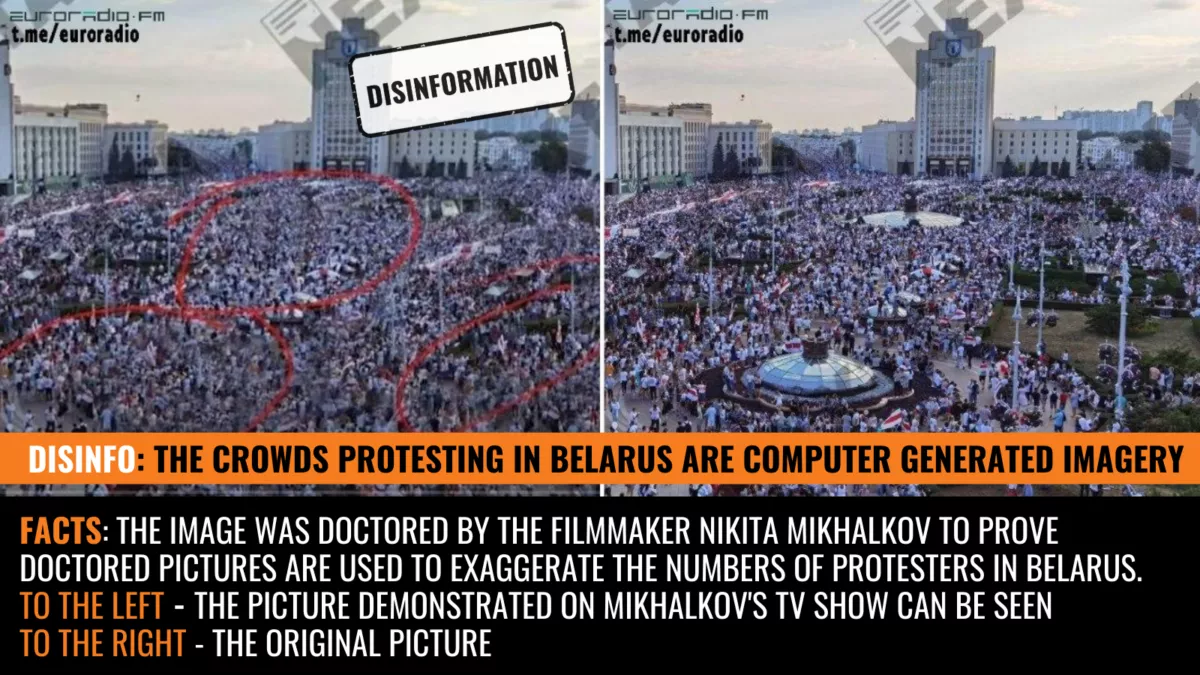

The Forum and the Tribune have different views on the concept of "fake". For the Forum, fake is information lacking a factual base. The participants in the discourse demand sources, they have a critical approach to statements. Attempts to doctor pictures, forge documents, hide details or just lie will sooner or later be brought to public attention.

For the Tribune, "fake" is anything that challenges the authority of the broadcaster. Whether or not a statement is based on fact is less important; the truth is anything that benefits the broadcaster. It is true, because the rulers say it is.

It is easy to see that most propaganda outlets have all the features of the Tribune. The media is an instrument, "The Party's Sharpest Weapon". A weapon wielded only by the powerful men in charge: their instrument. The audience is disempowered, force-fed views and thoughts.

Yet the audience possesses a powerful tool: it can stop listening. The former Czech President, poet and dissident Václav Havel called this "The Power of the Powerless". The Tribune is based on the acceptance of a set of ideological rituals that erode quickly, as they have never been tested in a fair contest between ideas.

Historically, Forums for public discourse have appeared in unexpected places when public debate has been forced out from the media. People have found spaces, rooms elsewhere, means of questioning, discussing, challenging authority when the media has degraded to dissemination of the Party Line.

The Forum corresponds with Democracy, just as the Tribune corresponds with Authoritarianism. The core of democracy is dissent; its method is critical thinking. The core of authoritarianism is submission; its method is disempowerment and corruption.

“Our job helps fight the Russian aggression. So we keep going.” Interview with Ukrainian fact-checkers VoxCheck

VoxCheck is an independent non-profit fact-checking project in Ukraine. In early February 2022, as the Kremlin was still massing its tanks and soldiers at the borders of Ukraine, we sent VoxCheck a couple of questions in writing about their work and pro-Kremlin disinformation in Ukraine. Before we could finalise the interview, Russia invaded Ukraine. On 2 March, the seventh day of the invasion, we received the replies to our questions and decided to publish them in their current form, as an illustration of the perseverance and resilience of the Ukrainian people.

VoxCheck continues to fight disinformation even as its work is interrupted by the howl of air raid sirens.

The language has been slightly edited for better readability.

What is VoxUkraine and what is its mission?

VoxUkraine is an independent analytical platform. We help Ukraine move into the future. We focus on economics, governance, social developments and reforms. Neither parties nor oligarchs support us. The quality of our materials is ensured by the editorial process.

VoxCheck is the fact-checking unit of VoxUkraine. We monitor and verify the statements that politicians and opinion leaders deliver to a wider audience, for example in interviews with leading media or on political talk shows. Our other areas of work are debunking fakes and countering Russian disinformation. The goal of our project is: fewer lies by politicians and more critical thinking by people.

In the current context of Russia’s military escalation, many observers point out that the Kremlin has stepped up its disinformation activities (the question was asked before the beginning of the Russian invasion – EUvsDisinfo). Do you agree, and if so, what are the major disinformation narratives targeting Ukrainian audiences?

The general narrative of Russian disinformation stays the same: “Ukraine is governed by Nazis”; “Russian language and culture are suppressed in Ukraine”; “Ukraine is governed by Western handlers”; “the Ukrainian army is shelling civilians in Donbas” and many others.

However, the number of misleading stories has increased with Russia’s military build-up and the beginning of the large-scale invasion. For example, there were allegations that Ukrainians had attacked and shelled certain locations (when in fact this did not happen), fabricated stories of Ukrainian soldiers surrendering and of high-level officials leaving Ukraine, and claims about the defeat of the Armed Forces of Ukraine (the goal of these stories is to demoralise the Ukrainian army).

You may find more examples at VoxUkraine.

Can you compare the present situation in the information environment to 2014? Have the Ukrainian people become more resilient to disinformation?

One thing to mention: there was no VoxCheck in 2014.

In our opinion, Ukrainians are more resilient than in 2014. But there is room for improvement.

According to a 2020 survey conducted by “Detector Media”, 15 per cent of Ukrainians had a low level and 33 per cent a below-average level of media literacy (conversely, 52 per cent had above-average or high media literacy – EUvsDisinfo).

It should be noted that nearly half (45 per cent) of the low media literacy segment were people aged 56-65 years. More than half of the segment with a below-average level of media literacy had vocational secondary education.

Hence, we see two key factors affecting media literacy: education and age. Some people grew up in the former USSR where nobody had even heard about media literacy given the massive propaganda and information manipulation at the time.

In your work you focus a lot on disinformation targeting the reform process in Ukraine. Can you single out one theme that, in your opinion, is particularly important to challenge?

The reform of the healthcare system of Ukraine is, in our opinion, the most targeted reform. Since it has many facets, there are a lot of stories and sub-topics to lie about.

What is the answer to the challenge of disinformation? Can disinformation be stopped, or is it an inevitable feature of a modern information environment? What can other countries learn from Ukraine?

Although some may consider it a violation of freedom of speech, we would recommend banning pro-Russian TV channels and websites that spread disinformation or serve as platforms for disinformation mouthpieces as an intervention to fight disinformation. If a disinformation platform is banned, restoring it takes resources, and repeated attempts at restoration could eventually deplete the resources available for disinformation.

Although banning outlets from television and radio frequencies may not prevent them from using other channels (e.g., outlets sanctioned in Ukraine continued using the social platforms Facebook, Telegram and YouTube), this administrative step may decrease their reach. It may even lead to their total shutdown, as happened with one of the TV channels sanctioned in Ukraine.

What are the biggest obstacles in your work, and what keeps you optimistic about the future?

Obstacles:

- Influx of misinformation. For the team it’s difficult to deal with all the incoming messages.

- Psychological toll.

- At the moment, there is a safety concern. Many of the members of our team are staying in Ukrainian cities where there is a risk of bombardment. So, the functioning is often interrupted by air raid alarms.

What keeps us optimistic:

- Support from the readers (they thank us and emphasise that we do an important job)

- Hope that our society will be able to think critically.

- Now, we understand as never before that our job helps fight Russian aggression. So, we keep going.

“‘Information War’ is a Term Used by the Kremlin to Justify Disinformation”

Roman Dobrokhotov is a Russian journalist and the editor-in-chief of the independent outlet The Insider.

He has earned international recognition for exposing government-sponsored disinformation in Russia, often working together with international partners. Notably, The Insider’s collaborative investigation with Bellingcat into the chemical attack in Salisbury in March 2018 received this year's European Press Prize Investigative Reporting Award.

In this exclusive interview, Roman Dobrokhotov shares his reflections on the nature of the disinformation campaign in Russia. He also tells how his work has resonated inside and outside Russia and why he is not very concerned about his personal safety.

Disinformation vs. Propaganda

Q. First a question about terminology. Which term do you prefer: propaganda, fakes, disinformation? Or another term?

A. Disinformation and propaganda are two completely different things. Propaganda highlights events which are favourable to the authorities, while disinformation spreads falsehoods. As a rule, disinformation is intentional – it is deliberately spread by someone who knows that he or she is deceiving the audience.

The term “disinformation” became widespread after the Cold War, and it was taken from the Soviet lexicon. It is very much a Soviet concept, which was used not only in the context of the media, but also in the context of the work of the KGB: there were special units of the KGB which engaged professionally in disinformation – by the way, they still exist nowadays, just in a different form. There are parts of [Russia’s military intelligence agency] the GRU whose task is to officially conduct disinformation campaigns.

The 2019 European Press Prize Investigative Reporting Award was awarded to The Insider and Bellingcat for their collaborative investigation, "Unmasking the Salisbury Poisoning Suspects: A Four-Part Investigation."

Soviet ideological heritage

Q. Do you think that the situation with disinformation is special in Russia, or can it be compared with the situation in other countries?

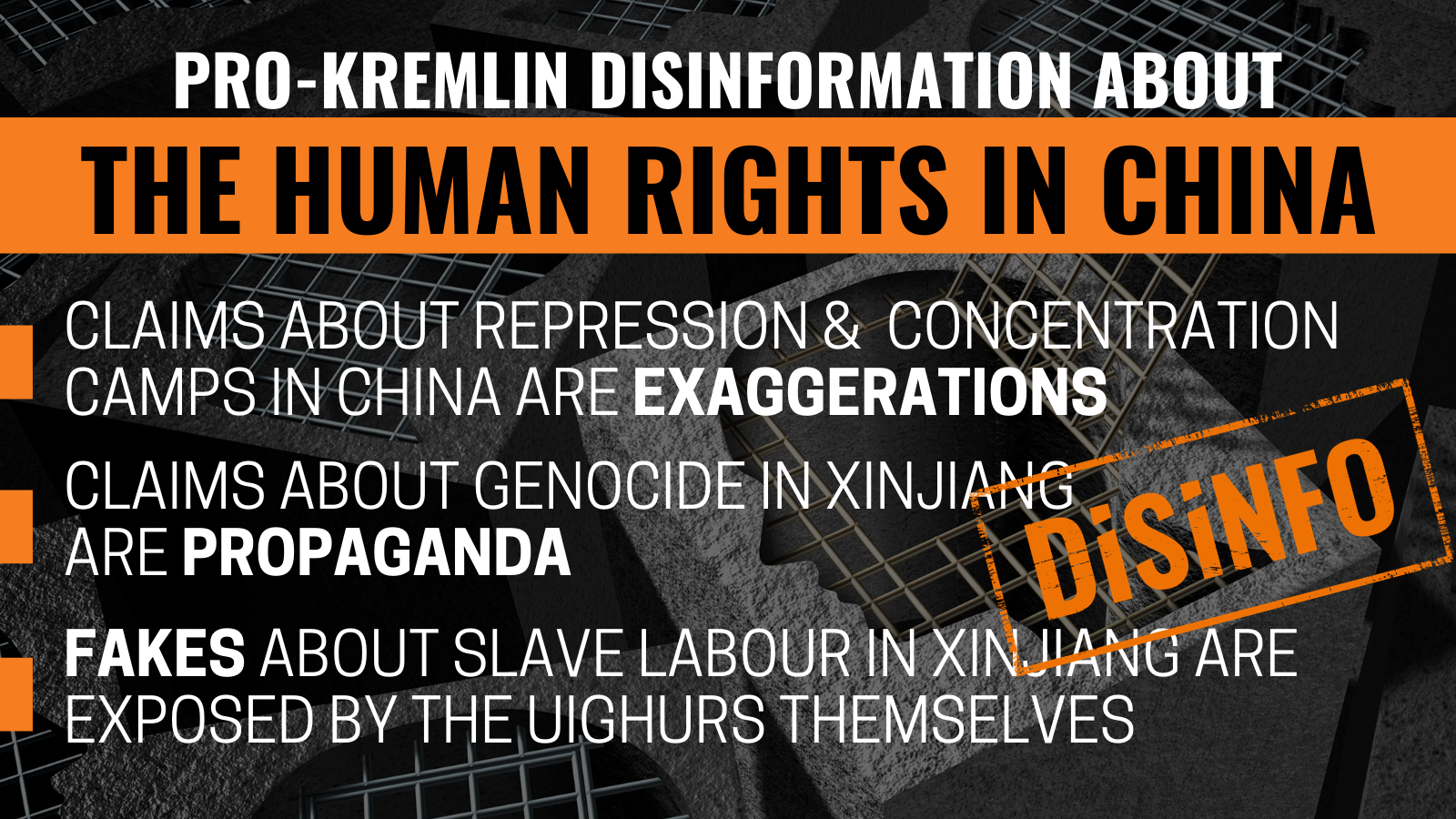

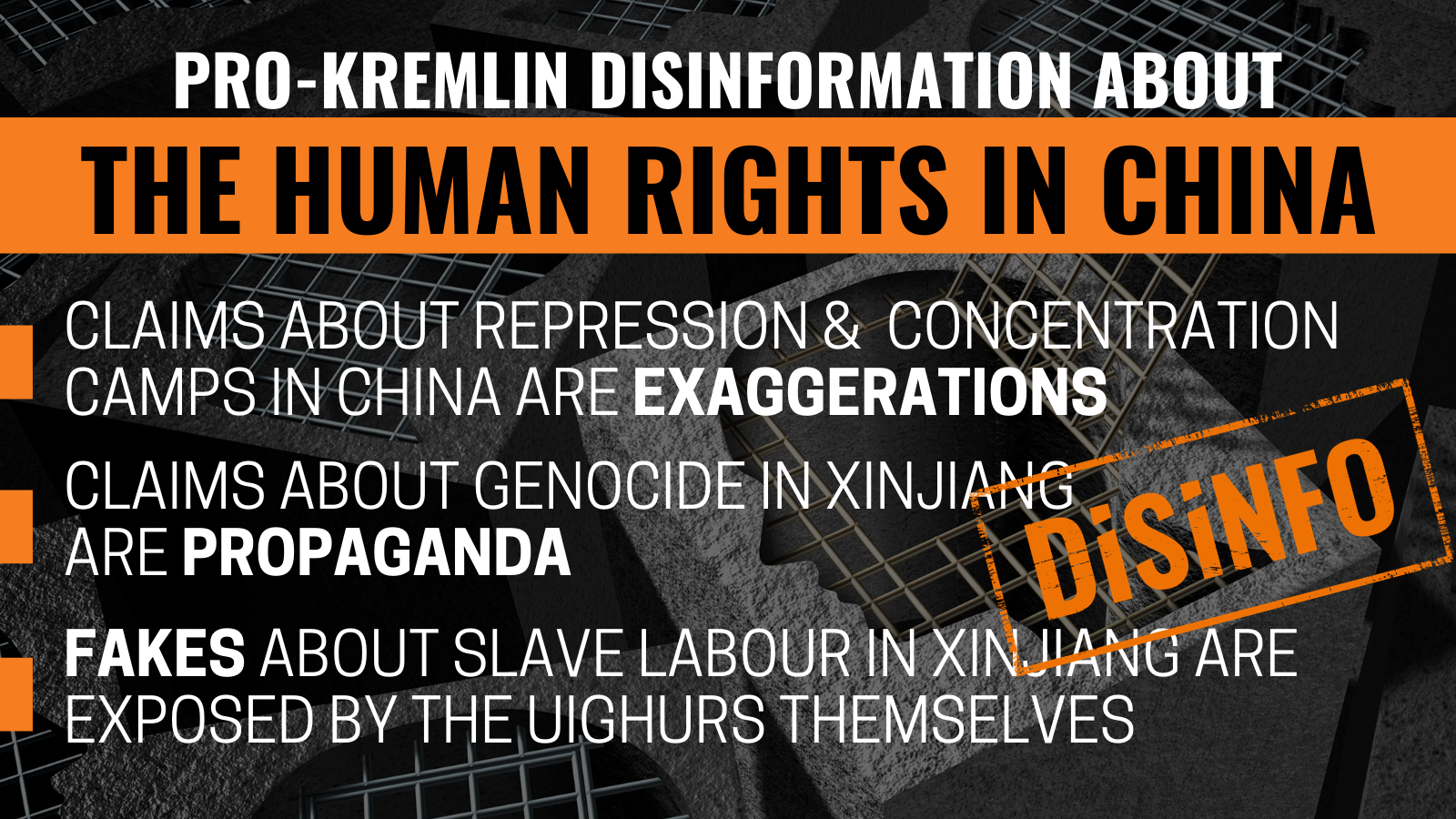

A. It can be compared with the situation in those countries that share the Soviet ideological heritage – like China, for example, which borrowed a lot from the USSR.

Something similar may exist in other authoritarian countries, even if they have not borrowed anything from the Soviet experience. The very logic of the existence of such states in the modern information environment implies that they have to limit their citizens’ access to information, disseminate defamatory information about enemies and beneficial information about themselves.

The besieged fortress

Q. What purpose or purposes does disinformation serve, in your opinion, if it is possible to generalise?

A. The main goal is to discredit the enemy or bring discord into the enemy camp. Disinformation is in fact a military concept: when you spread propaganda and disinformation leaflets behind enemy lines, you do it because you consider it part of an “information war”.

The Kremlin likes to talk about an “information war” in order to legitimise military terminology and thereby justify the disinformation campaigns: They say that they do not just cover current affairs, but participate in an “information war”, in which any means are justified.

Again, comparing with China: even though China is a totalitarian country, there is still no feeling of a war with the West, at least not today. In China, the propaganda does not describe China’s position in the world as a state of war, it does not claim that the country is surrounded by enemies. The Chinese have a different agenda: They underline how they are different; that they have an ancient culture; that they are a big country and so on. In other words, they advertise themselves in a more positive context.

The closest parallel to the situation in Russia would rather be North Korea, which also promotes a “besieged fortress” ideology: it also likes to conduct hacker attacks, disinformation campaigns and shameless propaganda.

In 2018, Roman Dobrokhotov received the Journalism as a Profession award in the Investigative Journalism category for the investigation into the Salisbury attack.

International investigations

Q. You have been doing investigative journalism for a long time. Can you give an example of how you have exposed disinformation and other problems, and to what this exposure has led? Has your exposure ever had concrete consequences?

A. “The Insider” has been doing investigative reporting since 2013, so we have published a huge variety of different stories, with a wide variety of resonances.

When we have written about local issues in Russia, we know that as a result of our articles, different sorts of crooks have faced criminal charges. This happens rarely, of course, but there are such examples. These stories have usually dealt with social topics; for example, when we wrote about an orphanage, we saw that the local authorities took care of the problems.

There are also more politically sensitive topics, but in those cases it is clear that we cannot make a big difference; if the state were to react, it would mean that it would not be an authoritarian state anymore.

In 2017, Roman Dobrokhotov and The Insider received the Council of Europe Democracy Innovation Award. Image: Council of Europe.

And then there are our international investigations; obviously, they are the ones that have the greatest effect. When we cover something that happens in Western countries, the democratic governments there are more willing to react to the hype in the press, they carefully read everything that is published, and try to somehow rectify the situation.

For example, after we discovered that one of the participants in the Salisbury case had also been in Poland and Bulgaria when the businessman Gebrev was poisoned, the special services of these two countries began to cooperate and exchange information, and realised that they were dealing with a Russian strategy rather than two random episodes.

There are many such examples. In Spain, the law enforcement agencies have also used the information that we provided on the GRU officers who travelled to Barcelona.

But the reaction is not always as strong as we expected; for example, in Germany, after we were able to link an assassin to the Russian special services, the investigation did not reach the political level, and the authorities are still trying to hush up this case and investigate it as if it were just a criminal incident.

In other words, the democracies are also different and show varying degrees of courage, but we have nothing to complain about in terms of resonance, despite the fact that our media outlet is rather small. In general, when we look at the citations of our work and at the influence we have, we see that reactions are quite strong.

In the 2018 documentary Factory of Lies, Roman Dobrokhotov was one of several Russian journalists who spoke about uncovering the hidden processes behind disinformation campaigns coming from Russia.

Competing with disinformation

Q. So do you think that journalistic investigations and exposure can pose a significant threat to disinformation? Or compete with it?

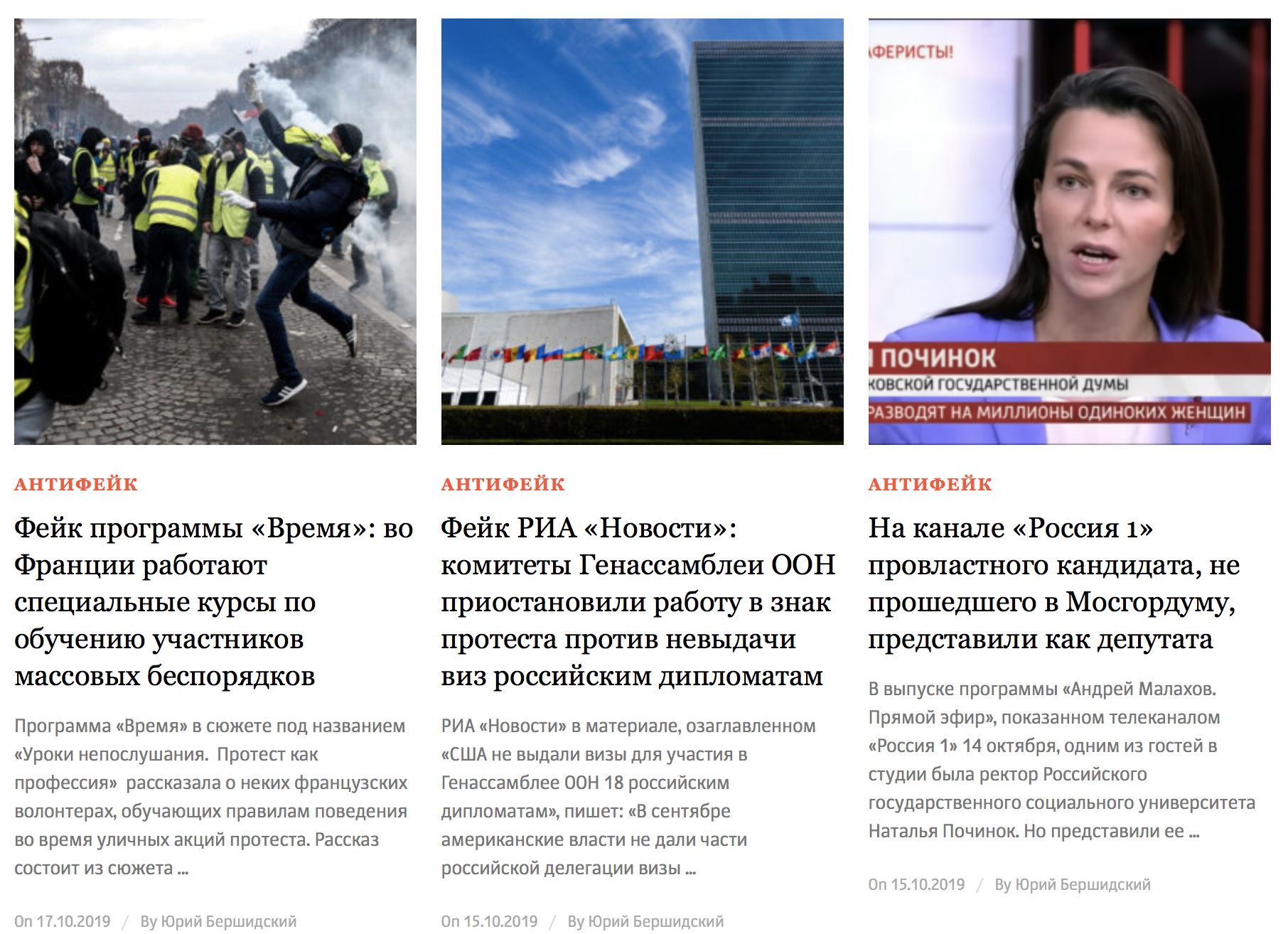

A. We have a separate section on our website called “Anti-Fake,” which is dedicated specifically to disinformation and propaganda, where we monitor and expose fakes. It aims precisely at confronting, and, if you will, competing with the propaganda.

Q. For which audience do you write, for example, about the Salisbury case? As a journalist, you probably keep in mind a specific reader – what can you say about him or her?

A. In terms of demographic criteria — gender, age, and regional location of the reader — our audience is fairly evenly distributed across Russia. The ratio of men and women, old and young people will be about the same as the average for Russia. Obviously, more people from large cities read us, but there are also more people living in large cities, so I don’t see any deviations here, and we are not trying to single out some kind of core audience and shape our texts accordingly.

An entire "Antifake" section of The Insider is devoted to analysing and debunking disinformation appearing in pro-Kremlin media. The section is updated with examples several times every week.

Of course, we understand that on average, a reader of The Insider will be a little more educated, advanced and progressive than the reader of any regular site. Why would people open The Insider if our articles made them angry? It happens by itself; we do not try to focus only on liberal circles, unlike, for example, Grani.ru, where they now refer to Russian police as “punishment forces” in their headlines, etc. – we try to be neutral in our language and the way we work.

So actually, I think that all citizens of Russia as a whole are our target audience. And if they are ideologically far from us – well, there is not much you can do about that; maybe they will read our stories and stop being ideologically far from us.

Q. What can you tell us about the methods you use in exposing disinformation?

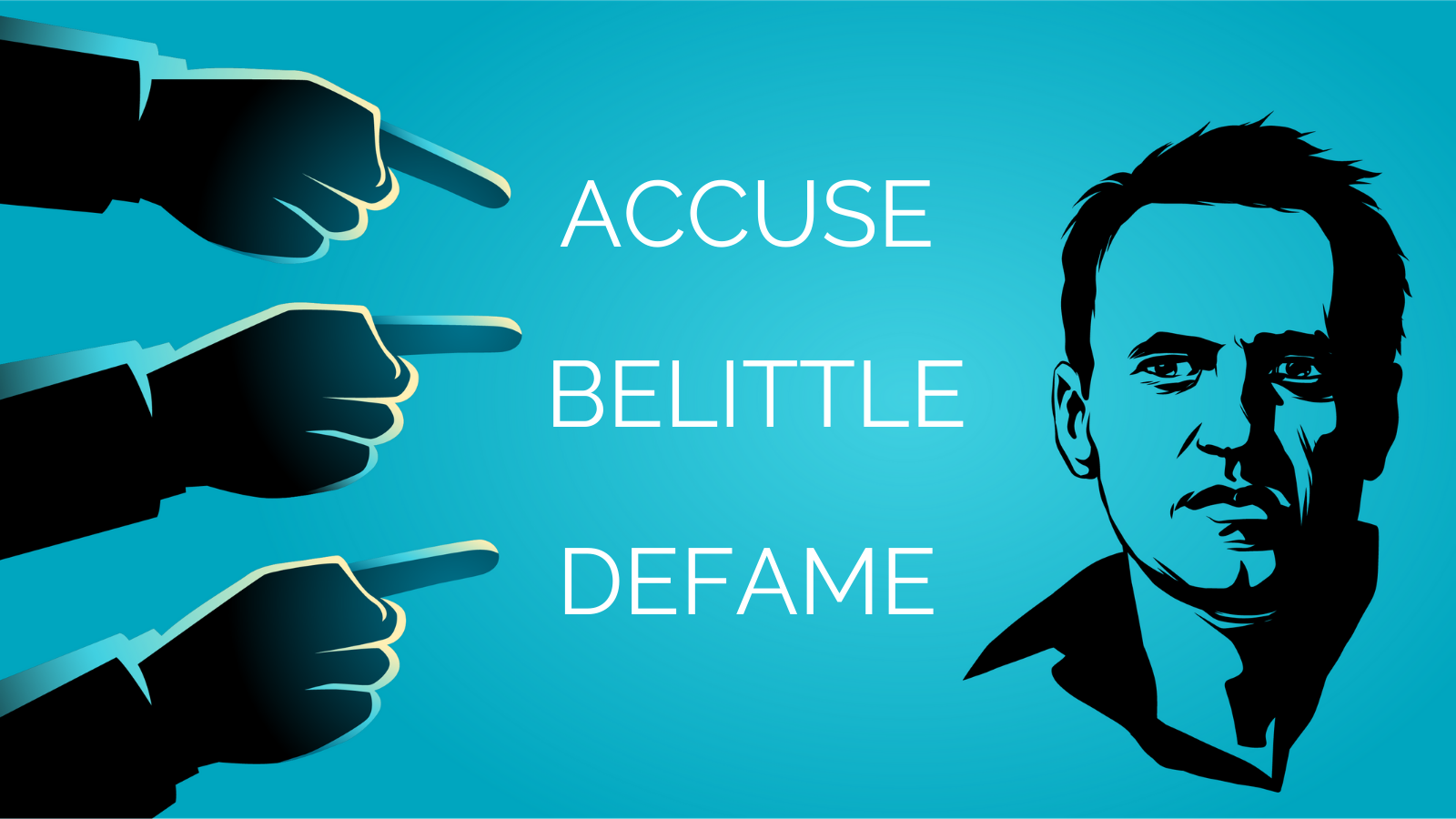

A. For example, we investigate who runs the propaganda. Tomorrow, we will publish an article about this kind of question, namely about [a consultant working for the Kremlin, Konstantin] Kostin and how the Presidential Administration organises provocations against [the opposition politician and anti-corruption activist Alexei] Navalny; that is one kind of story.

Monitoring fakes and exposing them, fact checking – that is a completely different story. These are different things, and different people are doing them at The Insider; they require completely different approaches.

https://www.youtube.com/watch?v=PnMhfIlsHjQ

Click to watch Roman Dobrokhotov explain to Associated Press how he uncovered the identity of one of the suspects in the Skripal case.

“How can you work from Russia at all?”

Q. The last question, on a more personal note, and I think you are often asked about this. Are you not afraid for your safety? Have you considered stopping your investigations, somehow changing your profile?

A. I was just on a business trip to the US, where I had an average of ten meetings a day for three days in a row – and during these three days one question was repeated constantly; it came up first at every meeting: Do you fear for your safety, and how can you work from Russia at all?

I think that journalists in Russia are in a privileged position compared to activists and even members of NGOs; we do not see such mass repressions against journalists, as, for example, in Turkey, where hundreds of people are imprisoned – not to mention countries like China, Iran, Egypt.

In the Russian regions it can indeed be difficult for journalists to work; there the value of a human life is even lower. So that is where we see more murders, attacks, journalists being arrested. In Moscow, you can work more or less without being touched, so I see no reason to worry.

But everything is understood through comparison; the risks are different everywhere. It would be one thing if I lived, say, in Switzerland; then maybe I would have given more thought to whether it is worth undertaking some dangerous investigations or not. But we live in Russia and share the experience of having lived in the Soviet Union, the experience of our parents – I also remember it well. Compared to that period, the risks are smaller now, so perhaps we shouldn’t complain; Russia is not such a totalitarian country. And even if the risks are big – well, sometimes you have to accept that there is a risk in what you do and that it’s part of the job.

Other articles in our series of interviews with Russian journalists:

“Propaganda Must be Opposed by the Language of Values”: Andrei Arkhangelsky is one of Russia’s most active commentators on the topic of disinformation and propaganda.

“Distracting the audience from the real problems”: Exclusive interview with Russian journalist Maria Borzunova. Every week she exposes pro-Kremlin disinformation in her programme ‘Fake News’ on the independent channel TV Rain.

"The Propaganda digs a cultural ditch between Russia and Europe": Pavel Kanygin has covered the MH17 case as an investigative journalist with Novaya Gazeta. In this exclusive interview, he shares his experience in challenging the state-sponsored disinformation.

Top photo: Roman Dobrokhotov on Facebook

Narratives and Rhetoric of Disinformation

The Narratives section will introduce the key narratives repeatedly pushed by pro-Kremlin disinformation and the cheap rhetorical tricks that the Kremlin uses to gain the upper hand in the information space. This section also discusses the lure of conspiracy theories and finally uncovers the dangers of hate speech. We will explain the pro-Kremlin tools and tricks used to erode trust; discourage, confuse and disempower citizens; attack democratic values, institutions and countries; and incite hate and violence.

The Key Narratives in Pro-Kremlin Disinformation

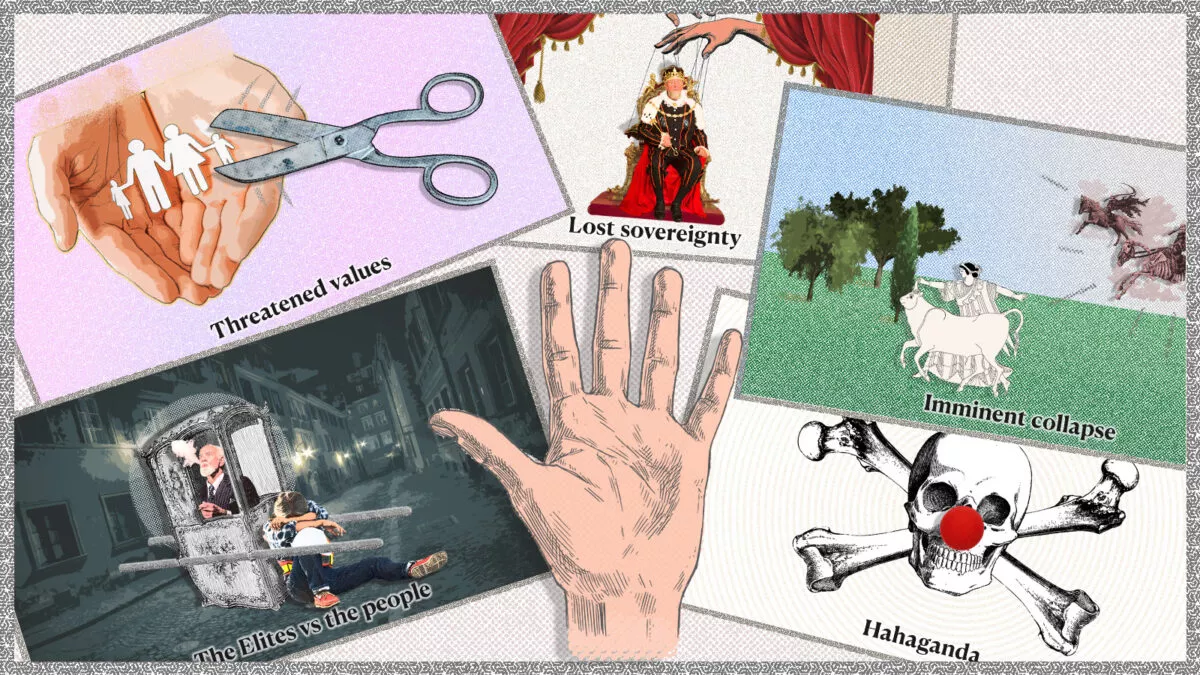

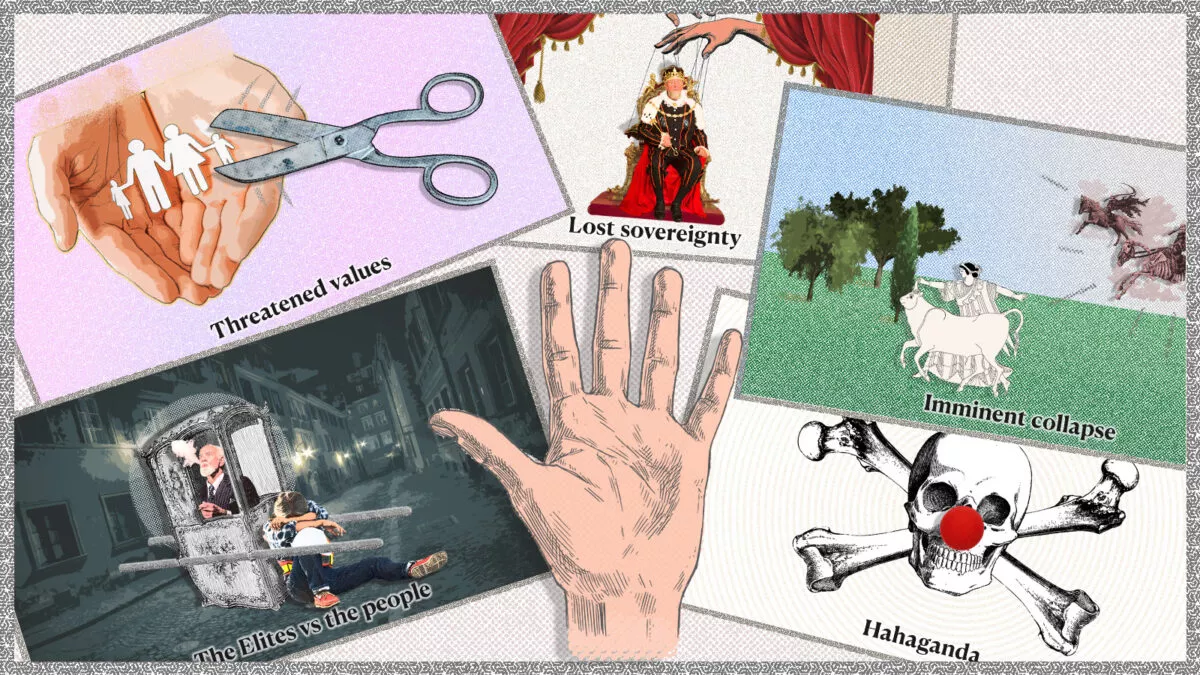

A narrative is an overall message communicated through texts, images, metaphors, and other means. Narratives help relay a message by creating suspense and making information attractive. Pro-Kremlin narratives are harmful and form a part of information manipulation. They are designed to foster distrust and a feeling of disempowerment, and thus increase polarisation and social fragmentation. Ultimately, these narratives are intended to undermine trust in democratic institutions and liberal democracy itself as a form of governing.

We have identified six major repetitive narratives that pro-Kremlin disinformation outlets use in order to undermine democracy and democratic institutions, in particular in “the West”.

These narratives are: 1) The Elites vs. People; 2) The 'Threatened Values'; 3) Lost Sovereignty; 4) The Imminent Collapse; 5) Hahaganda; and 6) Unfounded accusations of Nazism.

Rhetorical Devices as Kremlin Cheap Tricks

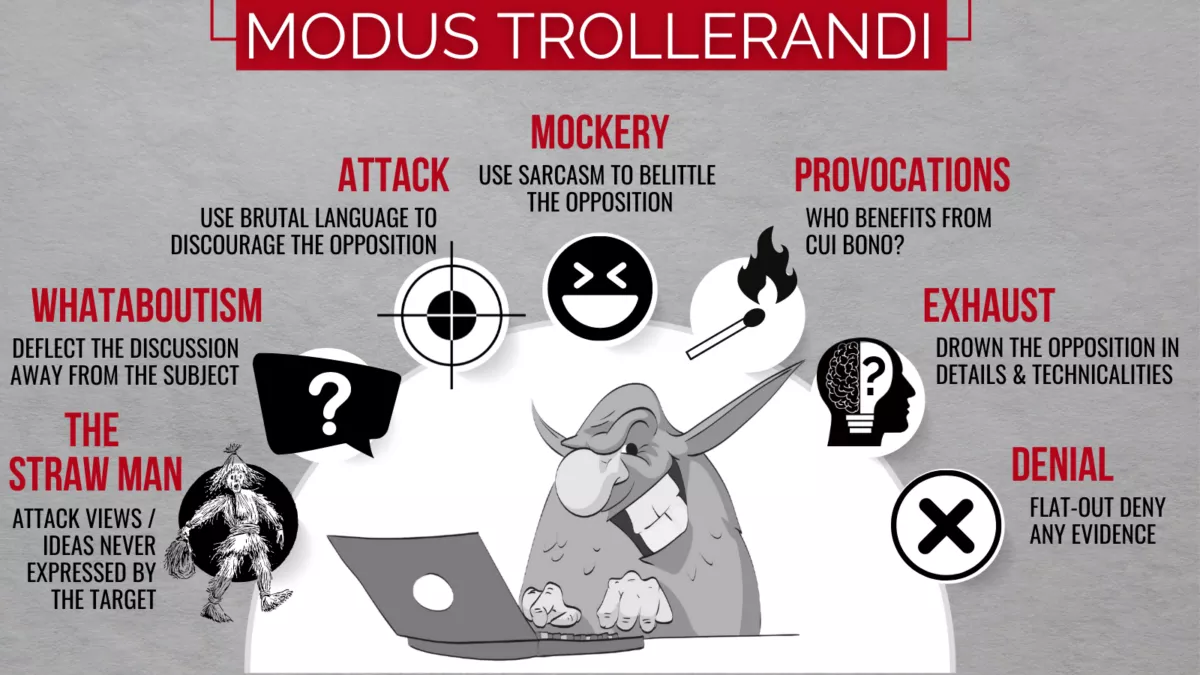

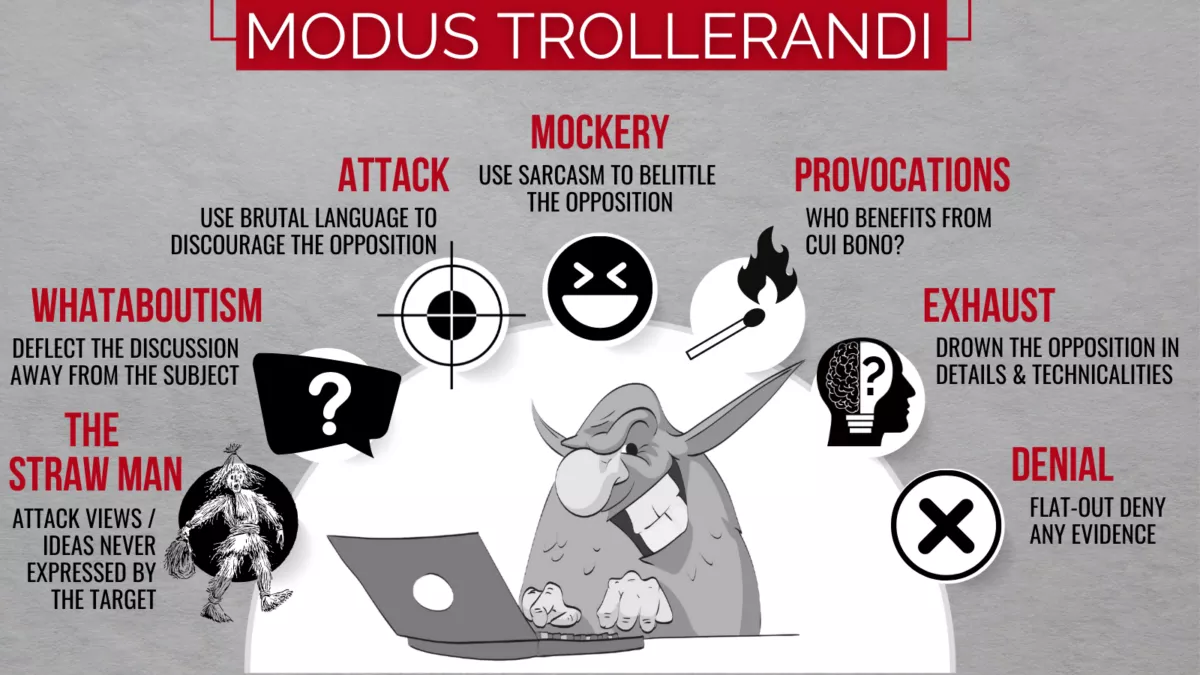

The Kremlin's cheap tricks are a series of rhetorical devices used to, among other purposes, deflect criticism, discourage debate, and discredit any opponents.

These rhetorical devices are designed to occupy the information space, create an element of uncertainty, and exhaust any opposition. They are often used in combination with each other to create a more effective disinformation campaign.

The rhetorical devices that pro-Kremlin outlets and online trolls alike use include the straw man, whataboutism, attack, mockery, provocation, exhaust, and denial.

For example, the straw man is a rhetorical device where the troll attacks views or ideas never expressed by the opponent. The Kremlin also frequently uses attack as a cheap trick to discourage the opposition from continuing the conversation. Sarcasm, mockery, and ridicule are also common Kremlin tactics to gain advantage in a debate. Finally, the Kremlin often uses denial to discredit opponents and dismiss any evidence that raises questions about Russian accountability.

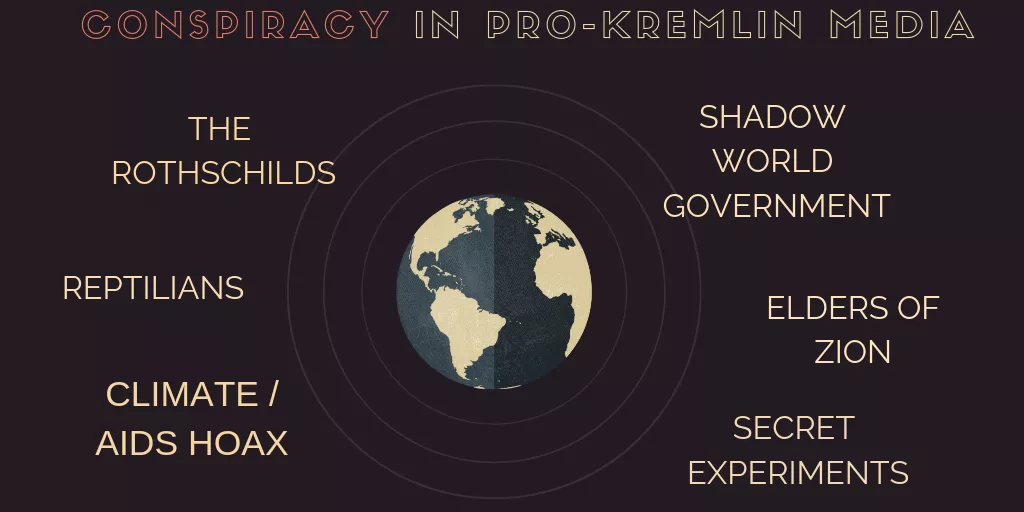

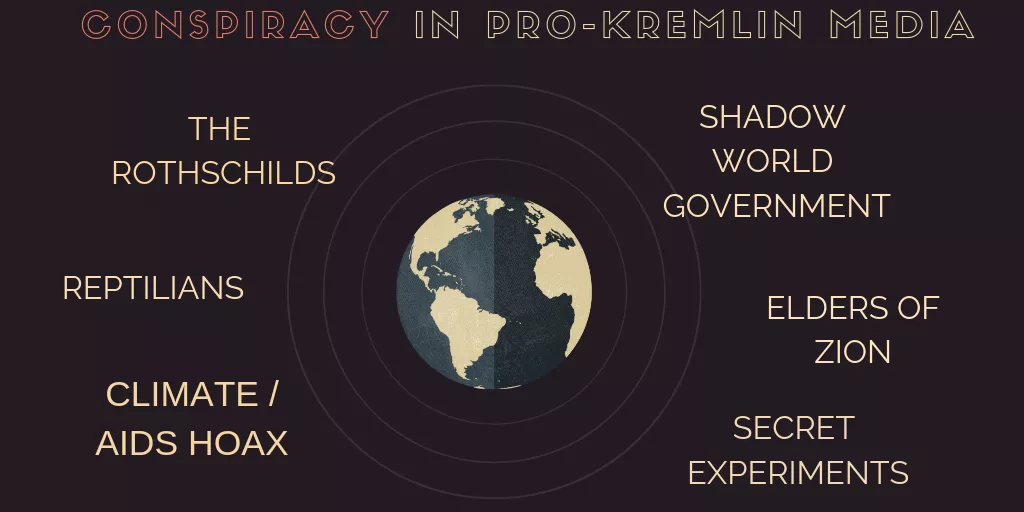

The Lure of Conspiracy Theories for Authoritarian Leaders

Conspiracy theories are not only a potent element for creating an enticing plot in thrillers, but also for propaganda purposes. One of the many conspiracy theories that have made their way onto Russian TV is the Shadow Government conspiracy theory. It is based on the belief that a small group of people, hidden from the public, controls the world.

From the propaganda perspective, the charm of the Shadow Government theory is that it can be filled with anything you want. Catholics, bankers, Jews, feminists, freemasons, “Big Pharma”, Muslims, the gay lobby, bureaucrats - all depending on your target audience.

The goal of the Shadow Government narrative is to question the legitimacy of democracy and our institutions. What is the point of voting if the Shadow Government already rules the world? What is the point of being elected if the Deep State resists all attempts to reform? We, as voters, citizens and human beings, are disempowered through the Shadow Government narrative. Ultimately, the narrative is designed to make us give up voting or exercising our right to express our views.

Hate Speech Is Dangerous

Hate speech is any kind of communication in speech, writing or behaviour that attacks or uses pejorative or discriminatory language with reference to a person or group on the basis of who they are; in other words, on the basis of their religion, ethnicity or affiliation.

Hate speech is dangerous as it can lead to wide-scale human rights violations, as we have witnessed most recently in Ukraine. It can also be used to dehumanise an opponent, making them seem less than human and therefore not worthy of the same rights and treatment.

Russian leaders and media have been increasingly using genocide-inciting hate speech against Ukraine and its people since the annexation of Crimea in 2014 and with increasing intensity before the full-scale invasion in February 2022. By portraying the legitimate government in Kyiv and the wider Ukrainian population as sub-human, both the general Russian population and Russian soldiers alike are able to justify atrocities against them.

KEY NARRATIVES IN PRO-KREMLIN DISINFORMATION

A defining feature of pro-Kremlin disinformation is its repetitiveness. For all the outrageous claims they make, pro-Kremlin outlets often sound like a broken record, sticking to just a handful of basic messages for domestic and international audiences. This is no accident or oversight but a deliberate design: repetition makes lies sound more believable. Pro-Kremlin disinformation outlets achieve this by sticking to a set of recurring narratives that work as templates for particular stories.

A narrative is an overall message, communicated through texts, images, metaphors, and other means. Narratives help relay a message: they create suspense and make information attractive. They can be combined and modified based on current events and prevailing attitudes. Some of them have been around for hundreds of years – variations of the narrative of the “decaying West” have been documented since the 19th century. EUvsDisinfo has identified a set of five dominant narratives used by pro-Kremlin disinformation outlets, and the key elements of Kremlin storytelling. We have seen these key pro-Kremlin disinformation narratives deployed on many occasions: in attempts at election interference, throughout the COVID-19 pandemic, and in an effort to justify the unprovoked war in Ukraine.

We bring you an updated overview of the most common disinformation narratives that continue to appear in Russian and pro-Kremlin disinformation outlets.

The first key narrative in pro-Kremlin disinformation: the Elites v the People

The idea of an elite disconnected from the hard-working people runs strongly in political history. Several politicians and political movements have claimed to represent the voice of the common man, the little guy, the silent majority, against a corrupt and smug clique comprising representatives of political parties, corporations and the media. This narrative is not the Kremlin’s invention, but pro-Kremlin disinformation outlets exploit it frequently.

Smorgasbord of scapegoats

This narrative can be very successful, as it provides a scapegoat for the target audience to blame for any grievances: bankers, Big Corporations, Jews, oligarchs, Muslims, Brussels bureaucrats, you name it. Russian disinformation outlets heavily exploited this narrative throughout the COVID-19 pandemic, notoriously alleging that Bill Gates either invented the coronavirus, or was using vaccines against it to implant “microchips”.

The narrative is also strongly connected with various conspiracy beliefs. A common feature is a claim about the existence of secret elites: shadow rulers, puppet-masters with odious intentions. Throughout the pandemic, it has proven to be a working, efficient and comfortable template for producers of disinformation. The EUvsDisinfo website contains numerous claims that the virus is man-made and that the measures to curb its spread are merely the elites’ ways of destroying the lives of ordinary people.

Beyond the pandemic, this narrative was deployed on the eve of the 2016 Brexit referendum, as these two Sputnik articles demonstrate: “The Threat from Eurocracy threatens Europe” and “Waffen-EU”.

Anglo-Saxons and Ukraine

The narrative of “the Elites v the people” has also been used in the context of Russia’s invasion of Ukraine. Pro-Kremlin outlets have tried to paint Russia’s invasion as an “Anglo-Saxon” plot, pitting Slavs against each other.

In pro-Kremlin parlance, “Anglo-Saxons” is used as a catch-all term to vilify the West, and in particular the UK and the US. Anglo-Saxons are supposedly cunning and bloodthirsty, devising nefarious plots for global domination. The term is frequently used to construct conspiracy beliefs and has a “clash of civilisations” element to it, helping to frame the West as the “other” and reinforcing the idea that Russia belongs to a “different civilisation”.

Therefore, in Ukraine, we have the (Anglo-Saxon) elites vs the (Slavic) people, according to the pro-Kremlin spin-doctors: the Anglo-Saxons are seeking conflict with Russia at all costs, organised the 2014 coup d’état disguised as a democratic protest, want to involve Ukraine in war against Slavs, and are using Ukraine as an anti-Russian outpost, etc.

Lies of Reason

The Elites v the People narrative has a history of more than a hundred years. Its purveyors claim to be the voice of reason and to advocate on behalf of disenfranchised citizens, speaking truth to power against elites that seek to hide the “truth” at any cost.

The “truth” can relate to a broad variety of issues, including war and peace, migration and the economy, while the particular elites deemed “guilty” of hiding the truth are strategically selected to suit the grievances of the target audience. Indeed, this narrative can be adapted and applied to a seemingly infinite number of issues: “The migration crisis is caused by big corporations in order to obtain cheap labour”; “The Global Warming Hoax is used by bankers to divert public attention from real-world problems”; “Global corporations, mainly arms manufacturers, are responsible for the war in Ukraine”.

Ultimately, while this narrative appears on its surface to sympathise with ordinary people, its roots are, in fact, authoritarian. Evidence is rarely provided to substantiate the claims made and, following the principles of conspiracy thinking, the very absence of evidence is sometimes used as proof: “See how powerful the elites are, hiding all trace of their conspiracy!” Typically, this narrative also demands that the reader rely exclusively on the word of the narrator: “I know the truth, trust me!” Indeed, like all narratives based on conspiracy beliefs, this one requires its audiences to accept the claims on the basis of faith rather than fact.

Read more on the Elites vs. the People here. Read more about Anglo-Saxons here.

The second key narrative in pro-Kremlin disinformation: the ‘Threatened Values’

The narrative about ‘Threatened Values’ is adapted to a wide range of topics and typically used to challenge Western attitudes about the rights of women, ethnic and religious minorities, and LGBTQI+ groups, among others. Pro-Kremlin commentators ridicule alleged Western ‘moral decay’ or ‘depraved attitudes’. By contrast, Russia and Orthodox Christianity stand out as the true defenders of traditional values, as this official Russian promotional video illustrates.

According to this narrative, the ‘effeminate West’ is rotting under the onslaught of decadence, feminism and ‘political correctness’ and driving down its economy, while Russia embodies traditional paternal values. This narrative is depicted in a 2015 cartoon by Russian state news agency RIA Novosti, illustrating Europe’s apparent moral decay: from Hitler, to sexual deviance, to a future of rabid hyenas.

The idea of a decaying West, juxtaposed against a Russia that is the ‘guardian’ of decency and morality, emanates from the very top of the Kremlin. According to an analysis by the European Council on Foreign Relations, as early as 2013 Putin had assumed this posture, condemning ‘Euro-Atlantic’ countries for their moral decadence and immorality.

Talking about sex…

Pro-Kremlin media eagerly followed suit. Russian state broadcaster Sputnik described Western mass culture as ‘various forms of paedophilia’. Pro-Kremlin outlets operating in Arabic claimed that the West attempts to destroy basic values, such as those related to the state and the family. In Armenia, pro-Kremlin disinformation outlets alleged that the West was ‘planting’ alien moral foundations to undermine national identities of other states. In this narrative, the Kremlin not only manages to ‘preserve’ basic decency and values, but also ‘defends’ them against the onslaught of immorality from the West.

According to the European Council on Foreign Relations’ analysis:

‘One clever propaganda trick was to enhance the image of the evil West by merging together the social conservative and the anti-Western posture. In this way, the West and Westernisers, gay people, liberals, contemporary artists and their fans, those who did not treat the Russian Orthodox Church with due respect, and those who dared to doubt Russia’s unblemished historical record were all presented as one “indivisible evil”, a threat to Russia, its culture, its values, and its very national identity.’

Homophobia goes hand in hand with the claim of protecting traditional values, so it is no surprise that Russian state media spend time ridiculing, for instance, rights for sexual minorities, as illustrated in these examples. More recently, pro-Kremlin outlets have also resorted to homophobic tropes in their denigration of Ukrainian service members.

Pro-Kremlin outlets show special delight when they can kill two birds with one stone: accusing the almighty EU of tyrannical behaviour by ordering individual states to abolish or destroy their own values. One example is the manipulated and severely misrepresented story, ‘European Parliament bans the words Mother and Father’.

Common Sense

Value-based disinformation narratives usually centre on threatened concepts like ‘tradition’, ‘decency’, and ‘common sense’ – terms that all have positive connotations, but are rarely clearly defined.

The narrative creates an ‘us vs. them’ framework, which suggests that those who are committed to traditional values are now threatened by those who oppose them and instead seek to establish a morally bankrupt dystopia. Russian and pro-Kremlin disinformation outlets pushed variations of this narrative in the run-up to the 2018 Swedish general election, as can be seen here and here. In Russian-language outlets, like the infamous St. Petersburg Troll Factory News Agency RIAFAN, the language of this narrative is particularly aggressive: ‘What it is like in the country of victorious tolerance: gays and lesbians issue dictates, oppression of men and women, Russophobia and fear’.

In contrast to the Western conception of values, which favours the individual rights of personal integrity, safety, and freedom of expression, the Russian value system entails a set of collective norms that every individual is expected to conform to.

Yet the Threatened Values narrative is always expressed from a position of perceived moral high ground in which the silent majority, committed to decency and traditionalism, is under attack from liberal ‘tyranny’. The target audience is invited to join the heroic ranks under the Kremlin banner, boldly fighting for family values, traditional Christianity, and purity.

Read more on the ‘Threatened Values’ here.

The third key narrative in pro-Kremlin disinformation: Lost Sovereignty

Russian and pro-Kremlin disinformation sources like to claim that certain countries are no longer truly sovereign. Back in 2015, a cartoonist for the Russian state news agency RIA Novosti illustrated this idea with an image: Uncle Sam is turning up the flame on a gas stove, forcing Europeans to jump up and down while crying for sanctions against Russia.

Original illustration: RIA Novosti

Since then, there have been many more examples of this narrative proliferating in pro-Kremlin outlets: for example, Ukraine is ruled by foreigners and the Baltic states are not really countries. Pro-Kremlin disinformation outlets also claim that with their accession to NATO, Finland and Sweden are now about to lose their sovereignty and that they are acting under foreign (US, NATO) pressure. Further examples of this narrative abound: the EU is directed by Washington, Japan is a vassal state, Germany is an occupied territory, decisions in Ukraine aren’t made by its president but by the US, and so on.

Pro-Kremlin outlets even use a specific vocabulary to define states that are ‘not-sovereign’: ‘limitrophes’, or frontier territories providing subsistence and subservience to their masters. The Polish edition of the pro-Kremlin propaganda outlet RuBaltica explains:

‘There are real states, which are capable of implementing all state functions, and then there is geopolitical scum or fictional countries that have formal attributes of statehood but which are not real states. These countries include the post-Soviet limitrophe states separating Russia from the West. The pseudo-elites of these countries are not able to respond to any serious historical challenge such as overcoming the migration crisis, protecting the border and fighting the epidemic. They keep asking Western Europe and the United States for help because they cannot cope on their own. These countries are ruled by puppets – they are able only to speak about “stopping Russia” and vote for anti-Russian resolutions in the EU and UN.’

The narrative of ‘lost sovereignty’ is ultimately a narrative of disempowerment aimed at eroding the very foundations of democracy. Why would anyone care about democratic processes and elections, if powerful outsiders rule their country?

In contrast, pro-Kremlin outlets claim, real sovereignty is possible only under Russian control.

No independent political will of the people

Closely related to the ‘lost sovereignty’ narrative are pro-Kremlin disinformation messages about so-called ‘colour revolutions’. These messages allege that social upheavals or political protests are orchestrated by powerful outsiders (the West) and never a genuine expression of citizens’ activism or grievances. The examples are many and date back to the Euromaidan protests in Ukraine in 2013-2014, when pro-Kremlin outlets falsely accused US officials of staging the popular protests.

The Kremlin’s dismissal of the independent political will and aspirations of other peoples is arrogant and often hides an imperialistic approach to the people or country in question. Failing to grasp the concept of free will, the Kremlin resorts to conspiratorial thinking – ‘Why would anyone in their right mind want to distance themselves from Russia? This can only be because of Anglo-Saxon-led manipulation.’ Not surprisingly, in the Kremlin’s view, the EU is also under Anglo-Saxon control.

Undermining the statehood of Ukraine

The narrative of ‘lost sovereignty’ aims to erode trust in and ultimately corrupt the foundations of democratic institutions. It has also gained a special significance in the attempts of pro-Kremlin outlets to justify military aggression against Ukraine. An infamous example was Putin alleging that ‘Lenin created Ukraine’ a few days before Russia launched a full-scale invasion (a claim that had already been circulating in the pro-Kremlin disinformation ecosystem).

In the context of Ukraine, the pro-Kremlin narrative of ‘lost sovereignty’ takes on an even more sinister, imperialist hue. It denies not only Ukrainian statehood, but also its very existence by alleging that ‘a state of Ukraine has never existed before’. This narrative, along with the myth of ‘Nazi Ukraine’, has been one of the central disinformation tropes justifying Russia’s unprovoked invasion of Ukraine. Related disinformation narratives include claims that Ukrainians, Russians, and Belarusians are ‘one nation’ and multiple allegations that Ukraine is on the verge of disintegration.

Read more about the ‘lost sovereignty’ narrative here.

The fourth key narrative in pro-Kremlin disinformation: Imminent Collapse

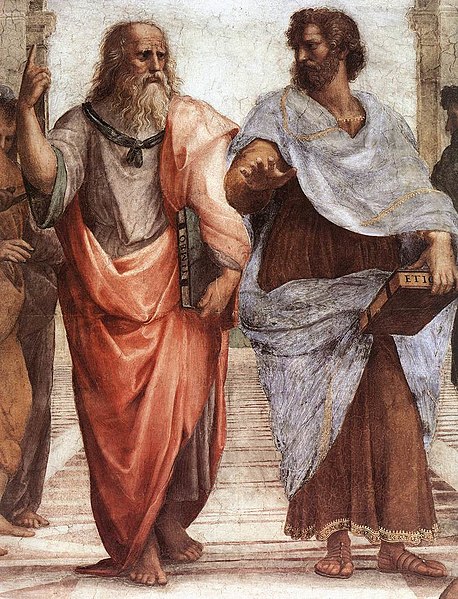

In Aristotelian rhetoric, the concept of kairos denotes a sense of urgency for action. Most speakers utilise this concept when they claim: act now, before it’s too late! In the pro-Kremlin disinformation context, the narrative of the ‘Imminent Collapse’ fulfils this function.

Russian and pro-Kremlin disinformation outlets regularly employ this narrative. Examples include: the EU is on the verge of collapse, the US is collapsing, NATO is breaking down, Ukraine’s entry into the EU would provoke the bloc’s collapse, and the financial system is collapsing.

According to Kremlin propaganda, other factors are also hastening this alleged collapse. For example, the RIA Novosti cartoonist depicts terrorism in Europe as a deadly scorpion that Europeans have unwittingly placed in their pocket.

An Old Story

Russia has been foreshadowing Europe’s imminent collapse for well over a century. Describing Europe or EU member states as ‘on the verge of civil war’ worked just as well in 2019 as it did back in 1919.

Russian state-run outlets feed their domestic audiences multiple stories about how terrible life is in the EU: unrest, violence, poverty, political extremism, and so forth. The aim is to make audiences feel comfortable living inside Russia, which is apparently not on the verge of collapse, in clear contrast to the situation elsewhere.

The ‘imminent collapse’ is a hard-working narrative that usually resonates well with both local and international target audiences, despite the fact that there has not been a civil war in the EU, nor has Europe collapsed. By many metrics, the bloc continues to flourish.

Target audiences that – legitimately or not – already fear political and social turmoil in their countries are particularly susceptible to this narrative.

Thus, this narrative works especially well during periods of real political challenges, like during the migration crisis in the fall of 2015, during the pandemic, and now during the Russian invasion of Ukraine.

Original illustration: RIA Novosti

The Final Battle

The enormous influx of migrants to Europe certainly posed a major challenge to European governments, but Russian and pro-Kremlin outlets portrayed the situation in grossly overstated and apocalyptic terms, reporting about the crisis as though it constituted a systemic collapse. Of course, the system survived intact, but the image of a collapse lingers on in Kremlin parlance.

During the early stages of the COVID-19 outbreak, in March 2020, nationalist philosopher Aleksandr Dugin relished witnessing the collapse of Western democracies:

"The global capitalist society collapsed immediately. Not everyone has understood it yet. But they will very soon. This means that the very substance of Liberal Globalism has collapsed, the world of office workers and beauty bloggers, transgender persons and climate activists, human right defenders and hipsters, migrants and feminists".

The approach was also visible in Russian and pro-Kremlin coverage of the Yellow Vest protests in France. The right to express discontent with government and politics is an integral part of democracy, and the citizens of any European state enjoy the right to protest peacefully. The Yellow Vest movement and other expressions of discontent like it belong to the European democratic tradition. They are not proof of the breakdown of the system, but of its ability to reinvent and rejuvenate itself.

Despite their poor track record as prophets, pro-Kremlin outlets have continued to fantasise about the imminent collapse of their perceived adversaries since the Russian invasion of Ukraine. In pro-Kremlin disinformation, the war in Ukraine is just a Western ruse to postpone the ‘inevitable collapse of global capitalism.’

The ‘imminent collapse’ narrative is also sometimes used to lament the alleged breakdown of European moral values and traditions. Russian and pro-Kremlin disinformation outlets for instance regularly describe children’s rights in Europe as an attack on family values. Their bottom line: Europe is dying by abandoning all decency and morals.

However, the reports of Europe’s death may have been greatly exaggerated. Navigating a tumultuous economic situation, wading through a global pandemic, or witnessing a heated political landscape should not be mistaken for an existential collapse.

Read more on the ‘Imminent Collapse’ here.

The fifth key narrative in pro-Kremlin disinformation: the Hahaganda

A final resort in disinformation, typically when confronted with compelling evidence or unassailable arguments, is to make a joke about the subject, or to ridicule the topic at hand.

The Skripal poisoning case is an excellent example of this strategy. Russian and pro-Kremlin disinformation outlets have continued their attempts to drown out the assassination attempt with sarcasm, turning the entire tragedy into one big joke. A similar approach has been employed in the case of the attempted assassination of Alexei Navalny, where pro-Kremlin media have competed to deliver “fun” stories on how to better kill the Russian dissident.

The methods of ‘hahaganda’ also involve the use of various derogatory words to belittle the concept of democracy, democratic procedures, and candidates.

Kremlin aide Vladislav Surkov describes the concept of democracy as “a battle of bastards” and instead recommends the ‘enlightened rule’ of Vladimir Putin as an alternative for Europe. Former Ukrainian President Petro Poroshenko was almost constantly ridiculed in pro-Kremlin media, as is Ukraine’s entire election process. According to Russian state media, an election with several candidates and no obvious outcome is considered a circus.

Ukraine’s sitting president Volodymyr Zelensky has also received more than his fair share of ridicule and humiliation in pro-Kremlin disinformation outlets during his time in office. Among other absurd allegations, he has been accused of taking military advice from his 9-year-old son and of dancing to the tune of the US, and allegedly also to that of Turkey. No pro-Kremlin orchestrated humiliation would be complete without the mandatory Nazi and Soros affiliations.

The Kremlin Weaponising Jokes

This weaponisation of jokes and public ridicule is so favoured by the Kremlin that the State News Agency RIA Novosti employed two pranksters, tasked with setting up fake telephone conversations with politicians, activists and decision-makers. Posing as representatives of the Alexei Navalny team, as environmental activist Greta Thunberg, and most recently as Ukraine’s prime minister, the Kremlin-affiliated jesters attempt to con their interlocutors into saying something politically damaging.

Of course, satire, humour, and parody are all integral components of public discourse. The right to poke fun at politicians or make jokes about bureaucrats is important to the vitality of any democracy.

It is ironic, then, that Russian and pro-Kremlin disinformation outlets often seek to disguise their anti-Western lies and deception behind a veil of satire, claiming it is within their rights of free speech. At the same time, however, they aggressively refuse to tolerate any satire that is critical of the Kremlin or undermines its political agenda. An example of this hypocrisy is Russia’s 2018 ban on the British comedy The Death of Stalin.

Ridicule and humiliation

In their 2017 report, NATO’s StratCom Centre of Excellence explained how Russian and pro-Kremlin disinformation outlets use humour to discredit Western political leaders.

One of its authors, the Latvian scholar Solvita Denisa-Liepniece, has suggested the term ‘hahaganda’ for this particular brand of disinformation, which is based on ridiculing institutions and politicians.

The grotesque feature of hahaganda is that it is very hard to defend yourself against it. There is no point in protesting. The joke is not supposed to convey factual information. It’s a joke! Don’t you guys have humour? Do you have to be so politically correct all the time?

The goal of hahaganda is not to convince audiences of the truth of a particular joke, but rather to undermine the credibility and trustworthiness of a given target, such as an individual or an institution, via constant ridicule and humiliation. At times, hahaganda takes a truly morbid turn, when the pro-Kremlin disinformation machinery decides to turn an attempted political assassination into a laughing stock.

Read more on Hahaganda here.

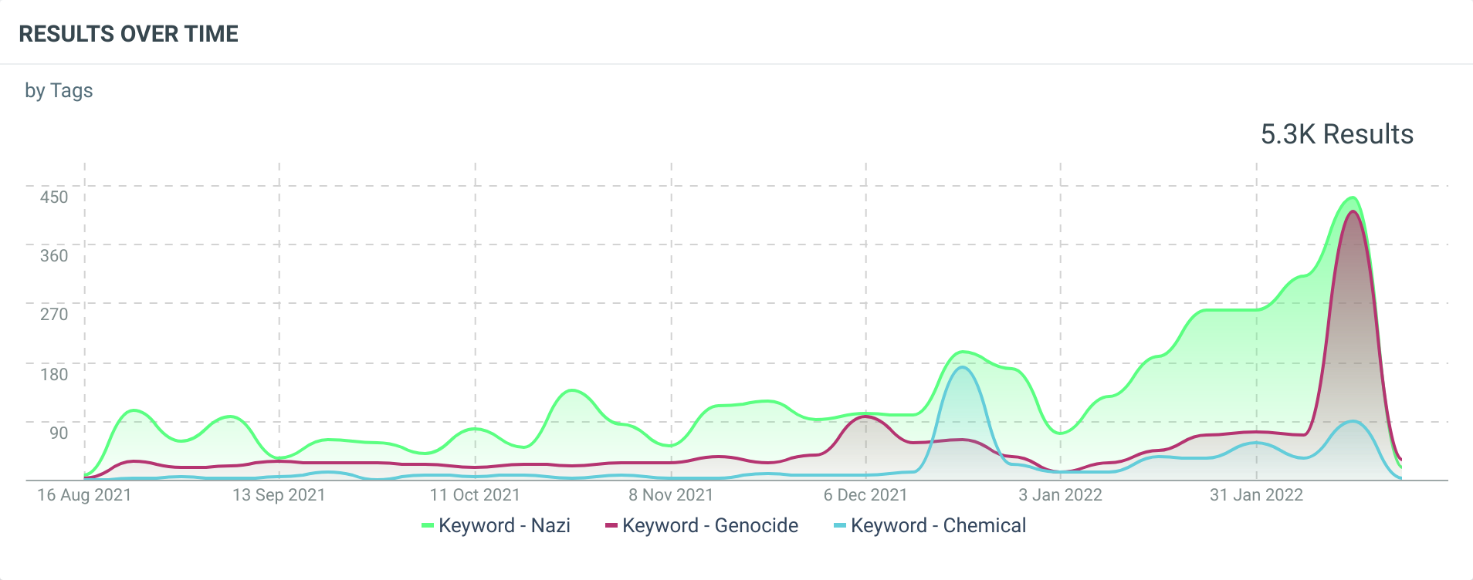

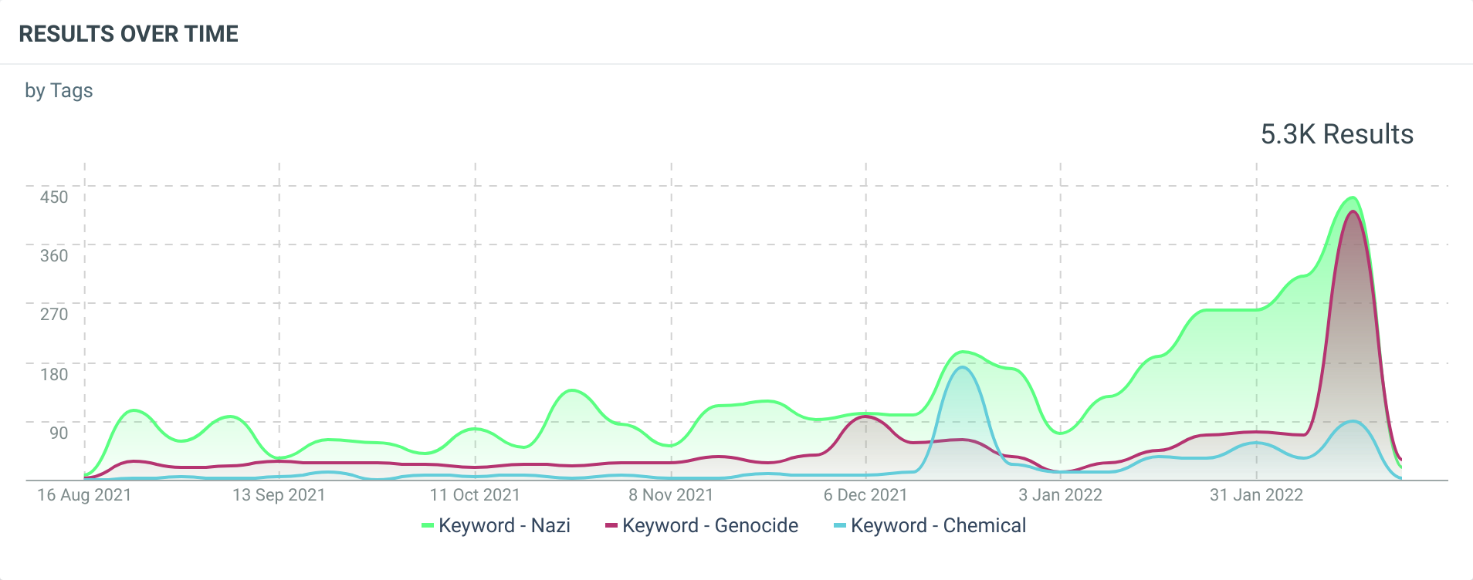

One more key narrative in pro-Kremlin disinformation: "Nazis"

Nazis East and West, but mostly in Ukraine

For many years, Russian state-controlled media have claimed that various states and entities are ruled by Nazis or permeated by Nazi ideology. In the jargon, ‘Nazi’ and ‘fascist’ have become synonyms. The examples are many and well documented in the EUvsDisinfo database since 2015: Moldova is ruled by Fascists, and so are the Baltic States and Poland. Europe is ‘supporting’ Fascism and so is the European Parliament. Speaking in Vladivostok six months into Russia’s invasion of Ukraine, Putin even speculated that the EU’s foreign policy chief, High Representative Josep Borrell, ‘would have been on the side of the fascists had he lived in the 1930s’ because the EU is supporting the fascists in Kyiv.

But for the Kremlin, Ukraine – a country where millions died fighting Nazism in WW2, where the Nazi ideology is banned, and that is currently governed by the grandson of a Holocaust survivor – is the most ‘Nazi’ of them all. The EUvsDisinfo database contains nearly 500 examples of pro-Kremlin disinformation claims about ‘Nazi/Fascist Ukraine’. It has been a cornerstone of the Kremlin’s propaganda since the Euromaidan protests in 2013-2014, when the Kremlin sought to discredit pro-European protests in Kyiv and, subsequently, the broader pro-Western shift in Ukraine’s foreign policy as a ‘Nazi coup’.

This is because in the Kremlinverse, ‘Nazis’ and ‘Nazism’ are in no way linked to the actual history or ideology of National Socialism or fascism, nor to contemporary manifestations of far-right ideas. Rather, anyone deemed hostile to Russia or the idea of ‘Russkiy Mir’ – a geopolitical project of uniting the Russian-speaking world under the sceptre of the Kremlin – is labelled a ‘Nazi’. First and foremost – Ukraine.

Glossing over history, hiding the Nazi contacts